Table of Contents

- What Is Monte Carlo Simulation in Radiotherapy?

- Historical Origins: From Nuclear Weapons to Cancer Treatment

- Monte Carlo Radiotherapy Dose Calculation: Core Principles

- Variance Reduction: Making Monte Carlo Clinically Viable

- Source Modelling for Photon and Electron Beams

- Brachytherapy and Ion Beam Modelling

- Dynamic Beam Delivery and 4D Monte Carlo

- Clinical Photon Dose Calculation with Monte Carlo

- Patient Dose Calculation: Uncertainties and Practical Methods

- Electrons, Protons, and Advanced Clinical Applications

- Monte Carlo–Based Quality Assurance

- Artificial Intelligence and the Future of Monte Carlo

- Book Structure and Dedicated Articles

- Quick-Reference Comparison Tables

- Why Monte Carlo Matters for Modern Radiotherapy

Monte Carlo simulation is the gold standard for radiation dose calculation in radiotherapy. Unlike deterministic algorithms that approximate particle transport through analytical shortcuts, Monte Carlo methods track individual particle histories — photons, electrons, protons, and heavier ions — through patient anatomy, sampling physical interaction probabilities at every step. The result is unmatched accuracy in heterogeneous tissues, small fields, and complex geometries where conventional algorithms falter. This comprehensive guide distills the essential knowledge from Monte Carlo Techniques in Radiation Therapy (2nd edition, CRC Press, 2022), edited by Frank Verhaegen and Joao Seco, and links to ten dedicated articles that explore each topic in depth.

Whether you are a medical physicist commissioning a Monte Carlo treatment planning system, a dosimetry researcher benchmarking simulations against measurement, or a radiation oncology resident trying to grasp why your Eclipse plan differs from a Monaco recalculation, this guide provides the conceptual framework you need. We will move from the mathematical foundations through source modelling, patient dose calculation, and emerging AI-driven acceleration techniques — all grounded in peer-reviewed physics, not hype.

What Is Monte Carlo Simulation in Radiotherapy?

Monte Carlo simulation solves the Boltzmann transport equation stochastically by tracking a large number of individual particle histories through matter. Each particle undergoes random interactions — Compton scattering, photoelectric absorption, pair production, bremsstrahlung, Coulomb scattering — sampled from known cross-section libraries, and the energy deposited at each point accumulates into a three-dimensional dose distribution.

In radiotherapy, the “matter” is a patient represented as a voxelized CT dataset, where each voxel is assigned material properties (density, elemental composition) derived from Hounsfield units. A linear accelerator or brachytherapy source generates a particle fluence that enters this voxelized geometry. The Monte Carlo code then simulates millions to billions of particle histories, recording energy deposition per voxel. As the number of histories increases, the statistical uncertainty in each voxel decreases proportionally to $1/\sqrt{N}$, where $N$ is the number of histories.

This stochastic approach captures physics that deterministic algorithms cannot handle well: lateral electronic disequilibrium in small fields, backscatter from high-Z implants, dose build-up and perturbation near tissue-bone-air interfaces, and the complex scatter conditions inside a multileaf collimator (MLC). For these reasons, Monte Carlo has become the reference standard against which all other dose calculation algorithms are benchmarked.

For a thorough treatment of the physical principles and random sampling techniques, see our dedicated article on Monte Carlo Fundamentals in Radiotherapy.

Historical Origins: From Nuclear Weapons to Cancer Treatment

The Monte Carlo method was born during the Manhattan Project in the 1940s, when Stanislaw Ulam, John von Neumann, and Nicholas Metropolis at Los Alamos National Laboratory needed to solve neutron diffusion problems that resisted analytical solution. The challenge was predicting how neutrons would scatter, slow down, and be absorbed as they traversed layers of fissile and shielding material — a problem with too many variables for closed-form mathematics. Ulam, recovering from an illness and playing solitaire, realized that random sampling could estimate probabilities far more efficiently than exhaustive enumeration. Von Neumann formalized the approach for the ENIAC computer, designing sampling algorithms that could exploit the machine’s limited arithmetic capabilities. Metropolis named the method “Monte Carlo” after the famous casino in Monaco — a nod to the central role of chance.

Medical physics adopted Monte Carlo gradually. Early applications in the 1960s and 1970s focused on dosimetry problems: calculating depth-dose curves for photon beams, characterizing ion chamber response, and studying backscatter factors. The development of the EGS (Electron Gamma Shower) code system at the Stanford Linear Accelerator Center (SLAC) in the late 1970s was a turning point. EGS provided a general-purpose coupled photon-electron transport code that physicists could adapt for medical applications. Its successor, EGSnrc, developed at the National Research Council of Canada, remains one of the most widely validated codes in dosimetry research.

Other major codes followed: MCNP from Los Alamos, PENELOPE from the University of Barcelona, Geant4 from CERN, and FLUKA from CERN/INFN. Each brought distinct strengths — PENELOPE’s detailed low-energy electron transport, Geant4’s modular C++ architecture, FLUKA’s robust hadron physics. The BEAMnrc code, a user-friendly EGSnrc front-end, became the de facto standard for modelling clinical linear accelerators.

The shift from research curiosity to clinical tool happened in the 2000s when computing power finally made Monte Carlo feasible for routine treatment planning. Moore’s law, combined with clever algorithmic optimizations like VMC++ and XVMC, reduced calculation times from hours to minutes. Commercial systems — Elekta’s Monaco (based on VMC++ and XVMC), Varian’s AcurosXB (a deterministic linear Boltzmann solver benchmarked extensively against MC), and RayStation (with direct MC capability for protons) — brought Monte Carlo accuracy to the everyday clinical planning workflow. The parallel development of TOPAS, a user-friendly Geant4 wrapper from Massachusetts General Hospital and SLAC, lowered the barrier for research institutions seeking to run proton therapy simulations without the steep learning curve of raw Geant4 programming.

Monte Carlo Radiotherapy Dose Calculation: Core Principles

Three foundations support every Monte Carlo transport simulation: random number generation, cross-section data, and particle transport algorithms. Understanding each is essential before diving into clinical applications.

Random Number Sampling

Monte Carlo codes rely on pseudo-random number generators (PRNGs) to sample probability distributions governing particle interactions. Modern codes typically use generators with very long periods (often exceeding $2^{64}$) and well-tested statistical properties, so that the sequence is not exhausted within a simulation and correlations between histories do not bias results. The fundamental operation is the inversion method: given a cumulative distribution function $F(x)$, a uniformly distributed random number $\xi \in [0,1)$ yields a sampled value $x = F^{-1}(\xi)$. Rejection techniques handle distributions where inversion is impractical.
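
The two sampling strategies can be illustrated with a minimal sketch. It assumes a toy distribution that is not taken from any particular code: the radius of a point chosen uniformly over a disk, with density $p(r) = 2r/R^2$. The function names, the seed, and the radius `R` are illustrative choices only.

```python
import math
import random

def sample_disk_radius(rng, radius):
    """Inversion: pdf p(r) = 2r/R^2 on [0, R) has CDF F(r) = (r/R)^2."""
    xi = rng.random()                 # uniform in [0, 1)
    return radius * math.sqrt(xi)     # r = F^{-1}(xi)

def sample_by_rejection(rng, pdf, x_min, x_max, pdf_max):
    """Rejection: propose x uniformly, accept with probability pdf(x) / pdf_max."""
    while True:
        x = x_min + (x_max - x_min) * rng.random()
        if rng.random() * pdf_max <= pdf(x):
            return x

rng = random.Random(12345)            # fixed seed so the run is repeatable
R = 1.0                               # hypothetical source radius (arbitrary units)
n = 100_000

mean_inversion = sum(sample_disk_radius(rng, R) for _ in range(n)) / n
mean_rejection = sum(
    sample_by_rejection(rng, lambda r: 2.0 * r / R**2, 0.0, R, 2.0 / R)
    for _ in range(n)
) / n
analytic_mean = 2.0 * R / 3.0         # integral of r * 2r/R^2 over [0, R]
```

Both estimates should land close to the analytic mean of $2R/3$. Inversion uses one random number per sample, while rejection discards about half of its proposals for this density, which is why codes prefer inversion whenever $F^{-1}$ is available in closed form.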

Cross Sections and Interaction Physics

Photon interactions are relatively straightforward to simulate because each event (Compton, photoelectric, pair production, Rayleigh) can be treated individually. The mean free path between interactions is:

$$\lambda = \frac{1}{\mu_{\text{total}}}$$

where $\mu_{\text{total}}$ is the sum of all macroscopic cross sections at the current photon energy. A random number determines the distance to the next interaction, and another determines which interaction type occurs, weighted by relative cross sections.
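
As a rough sketch of these two sampling steps: the distance to the next interaction follows an exponential distribution, so inversion gives $s = -\lambda \ln(1-\xi)$, and the interaction type is picked with probability $\mu_i / \mu_{\text{total}}$. The coefficients below are illustrative placeholders rather than reference cross-section data, and the function names are our own.

```python
import math
import random

# Illustrative attenuation coefficients in 1/cm (placeholders, not reference data).
MU = {"compton": 0.0700, "photoelectric": 0.0002, "rayleigh": 0.0005}

def sample_free_path(rng, mu_total):
    """Distance to the next interaction in cm: s = -ln(1 - xi) / mu_total."""
    return -math.log(1.0 - rng.random()) / mu_total   # 1 - xi lies in (0, 1]

def sample_interaction(rng, mu_by_type):
    """Pick an interaction type with probability mu_i / mu_total."""
    mu_total = sum(mu_by_type.values())
    threshold = rng.random() * mu_total
    running = 0.0
    for name, mu in mu_by_type.items():
        running += mu
        if threshold < running:
            return name
    return name   # guard against floating-point round-off on the last bin

rng = random.Random(7)
mu_total = sum(MU.values())
mean_free_path = 1.0 / mu_total       # lambda from the equation above
n = 100_000

mean_sampled = sum(sample_free_path(rng, mu_total) for _ in range(n)) / n
counts = {name: 0 for name in MU}
for _ in range(n):
    counts[sample_interaction(rng, MU)] += 1
compton_fraction = counts["compton"] / n
```

The average sampled path should approach $\lambda$, and the fraction of Compton events should approach the ratio of the Compton coefficient to the total.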

Condensed History for Charged Particles

Electrons (and positrons) undergo millions of Coulomb interactions per centimetre of tissue. Simulating each individually (the so-called “analog” approach) is computationally prohibitive. The condensed history (CH) technique, introduced by Berger in the 1960s, groups many small interactions into single large steps. Energy loss is sampled from the Landau or Vavilov distribution along each step, and angular deflection comes from multiple-scattering theories (Molière, Goudsmit-Saunderson). In Berger’s classification, Class I algorithms (e.g., ETRAN and the ETRAN-based electron transport in ITS and MCNP) group all interactions; Class II algorithms (EGS4, EGSnrc, PENELOPE) explicitly sample “catastrophic” hard collisions above an energy threshold while grouping the rest.

Boundary crossing algorithms are critical in medical physics. When a condensed-history step crosses a material boundary (e.g., soft tissue to bone), the step must be broken at the boundary and restarted with the new material’s properties. Inaccurate boundary crossing causes dose artifacts at interfaces — precisely the locations where Monte Carlo accuracy matters most.

For a deeper dive into transport algorithms and numerical integration methods, consult our article on Monte Carlo Fundamentals in Radiotherapy.

Variance Reduction: Making Monte Carlo Clinically Viable

Raw (analog) Monte Carlo simulation converges too slowly for clinical use. Variance reduction techniques (VRTs) accelerate convergence without introducing bias, reducing computation time by factors of 10 to 1000.

Classical Techniques

| Technique | Mechanism | Typical Efficiency Gain | Primary Application |
|---|---|---|---|
| Particle Splitting | Divide particle into N sub-particles with weight 1/N at a geometry boundary | 5–20× | Deep-seated tumours, shielded regions |
| Russian Roulette | Kill low-importance particles probabilistically; survivors gain weight | 2–10× | Eliminating particles heading away from scoring region |
| Range Rejection | Terminate charged particles whose residual range cannot reach the scoring region | 2–5× | Electron beam calculations |
| Interaction Forcing | Force rare interactions (e.g., bremsstrahlung) to occur more frequently, adjusting weight | 5–50× | Detector response, thin-target simulations |
| Exponential Transform | Bias step-length sampling toward a preferred direction | 2–10× | Deep-penetration shielding problems |
| Woodcock (Delta) Tracking | Use maximum cross section across all regions; virtual interactions fill the gap | Variable | Complex heterogeneous geometries |
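
The weight bookkeeping behind splitting and Russian roulette can be shown in a few lines. This is a minimal sketch with hypothetical function names and an arbitrary survival probability; it is not the implementation used by any specific code. The point it illustrates is that both games preserve the expected statistical weight, which is why they do not bias the result.

```python
import random

def split(weight, n):
    """Split one particle into n copies, each carrying weight / n."""
    return [weight / n for _ in range(n)]

def russian_roulette(rng, weight, survival_prob):
    """Kill with probability 1 - p; a survivor carries weight / p. None means killed."""
    if rng.random() < survival_prob:
        return weight / survival_prob
    return None

rng = random.Random(2024)
copies = split(1.0, 8)                # eight copies of weight 0.125

n_trials = 100_000
surviving_weight = 0.0
survivors = 0
for _ in range(n_trials):
    w = russian_roulette(rng, 1.0, 0.25)   # arbitrary survival probability
    if w is not None:
        surviving_weight += w
        survivors += 1

mean_weight = surviving_weight / n_trials    # expected weight per original particle
survival_fraction = survivors / n_trials     # about one in four survives
```

Only about a quarter of the particles survive the roulette, yet the mean weight per original particle stays near 1, so the time saved on discarded particles is not paid for with a shifted mean.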

Advanced Techniques for Radiotherapy

Directional bremsstrahlung splitting (DBS) revolutionized LINAC head simulation. When a bremsstrahlung event occurs in the target, the code splits it into many photons, each carrying a correspondingly reduced statistical weight. Photons aimed at the treatment field are kept and transported, while those heading elsewhere are subjected to Russian roulette. This can improve efficiency by two orders of magnitude for photon beam simulations.

History repetition (also called particle recycling) reuses phase-space particles from the LINAC simulation multiple times in the patient geometry. Recycling cannot remove the latent variance of the phase-space file (the residual uncertainty set by its finite number of independent particles), and the recycled histories are correlated with one another. The trade-off is often acceptable when the phase-space file contains several hundred million particles.

Macro Monte Carlo (MMC) pre-computes electron transport kernels in spherical geometries and looks them up during patient simulation, avoiding the expensive step-by-step condensed-history calculation. VMC (Voxel Monte Carlo) and its descendants (VMC++, XVMC) exploit the regular voxel geometry of patient CT data for fast boundary crossing, achieving sub-minute calculation times for photon beams. These speed-optimized codes underpin commercial treatment planning systems like Elekta Monaco.

For full details on splitting, Russian Roulette, and advanced acceleration schemes, see our dedicated article on Monte Carlo Fundamentals in Radiotherapy.

Source Modelling for Photon and Electron Beams

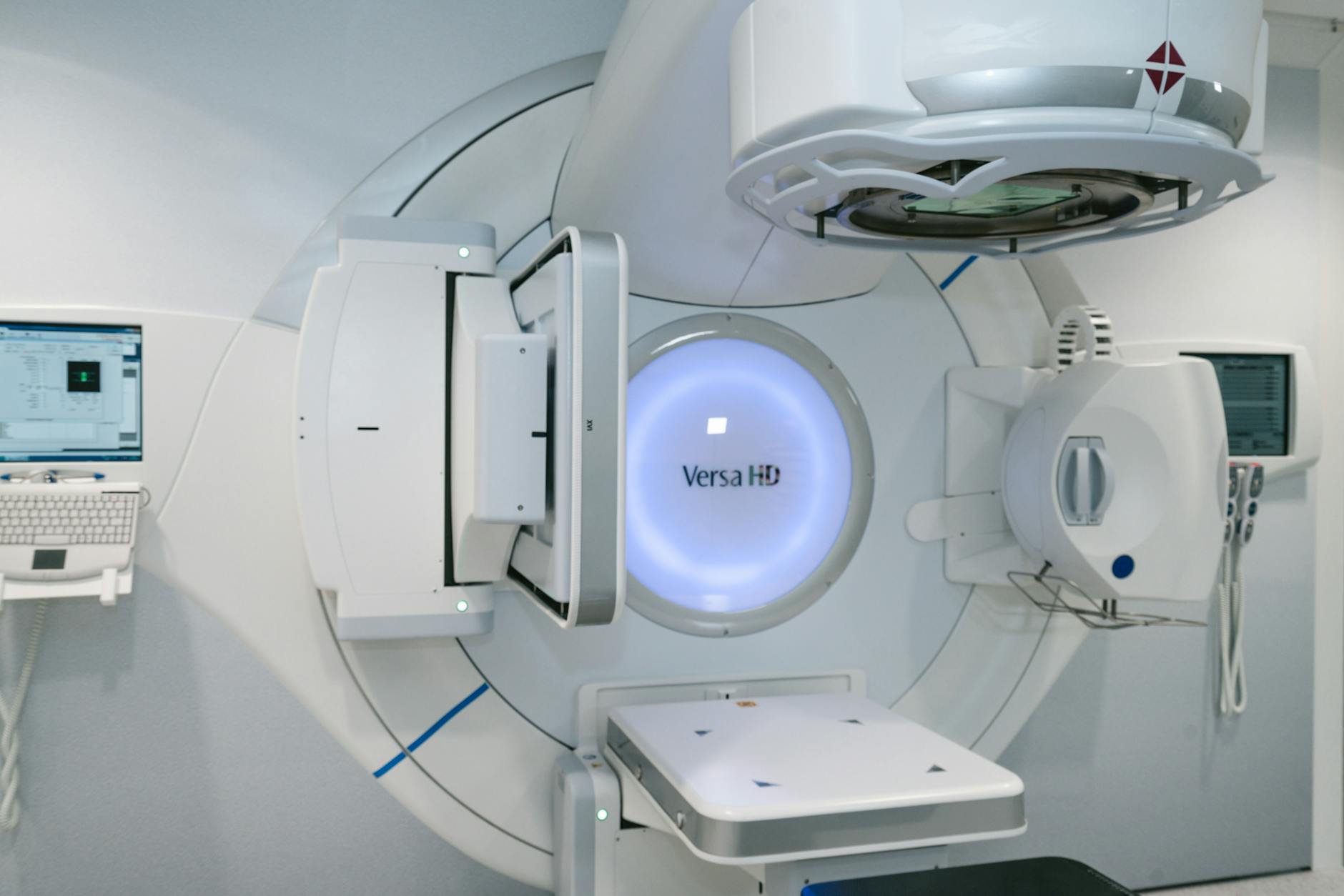

Accurate dose calculation begins with an accurate source model. Monte Carlo simulation of a clinical linear accelerator typically proceeds in two stages: first, a detailed simulation of the LINAC treatment head, and second, transport of the resulting particle fluence through the patient.

LINAC Head Simulation

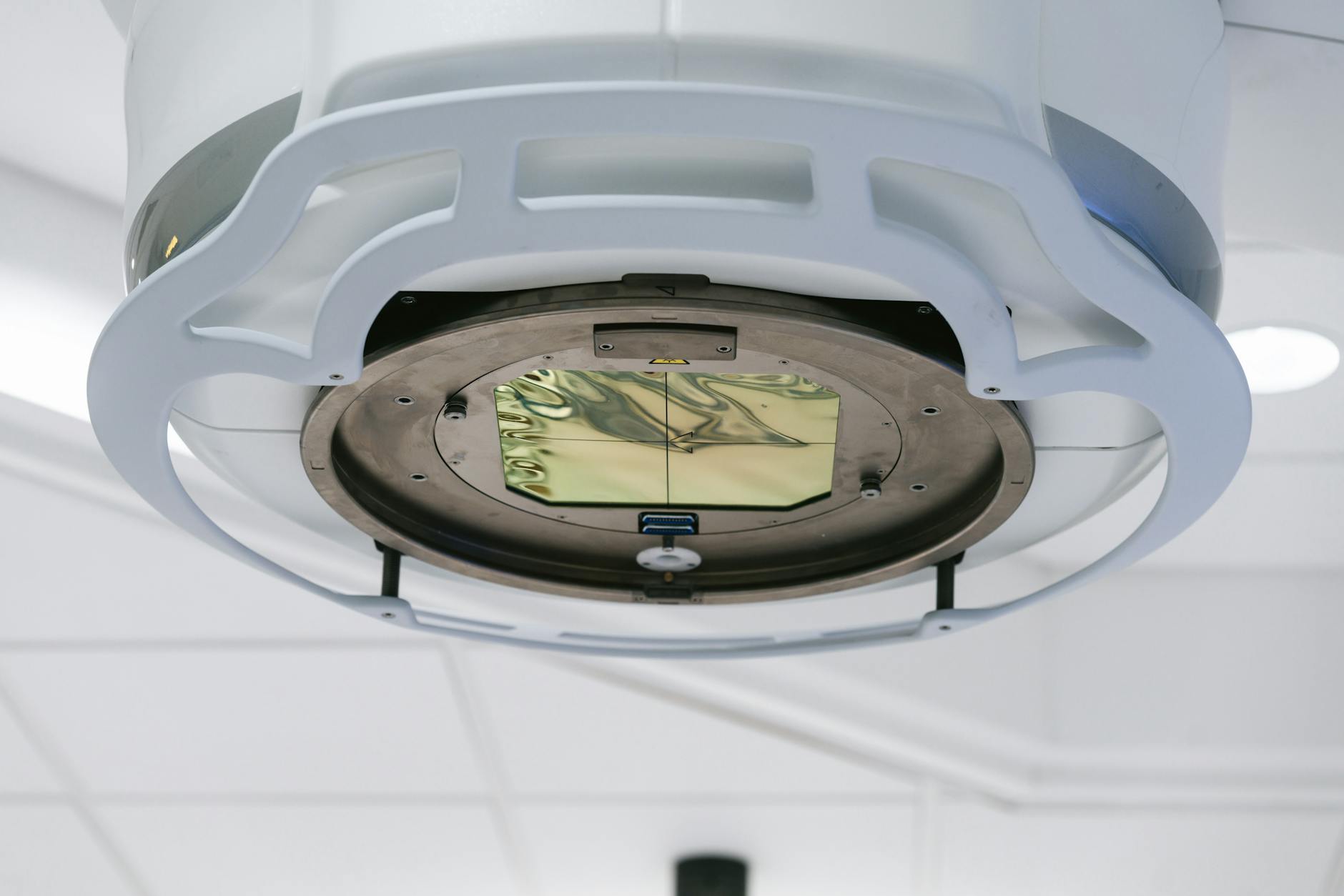

The treatment head of a medical LINAC contains several components that shape the beam: the electron gun, accelerating waveguide, bending magnets, primary collimator, X-ray target (for photon beams), flattening filter (or lack thereof, in FFF beams), monitor chamber, secondary collimators (jaws), and the MLC. Monte Carlo codes like BEAMnrc model each component as a separate geometric module, using manufacturer-provided blueprints and material specifications.

The incident electron beam parameters (mean energy, energy spread, spatial distribution, and angular divergence) are typically tuned to match measured dosimetric data. A common commissioning approach adjusts these parameters until percent depth-dose curves and lateral profiles in water agree with ion chamber measurements within 1%/1 mm.

Phase-Space vs. Source Models

| Feature | Phase-Space File | Source Model |
|---|---|---|
| Storage | Tens of GB per field | Kilobytes of parameters |
| Field-size flexibility | Fixed per simulation | Arbitrary fields from single model |
| Accuracy | Exact (limited by statistics) | Depends on model fidelity |
| Particle recycling | Required for adequate statistics | Not needed (infinite sampling) |
| MLC modelling | Included in file | Requires separate transport or attenuation model |

Phase-space files record every particle’s position, direction, energy, and statistical weight on a scoring plane below the LINAC head. They serve as a “snapshot” of the beam that can be reused for multiple patient simulations. The downside is file size and the need for particle recycling.

Parametric source models (e.g., multiple-source models) represent the LINAC output as a superposition of components: a primary point source from the target, an extra-focal source from the flattening filter or primary collimator scatter, and an electron contamination source. These models are compact and flexible, but their accuracy depends on careful fitting to measured beam data.

Stereotactic beams deserve special attention. Small-field dosimetry (fields below 3 cm) pushes both measurement and simulation to their limits. Monte Carlo plays a dual role: as the reference calculation for small-field output factors, and as the planning algorithm for stereotactic radiosurgery. See our related article on Monte Carlo MU Calculation for how monitor unit calculations are performed under these conditions.

Electron beam modelling follows similar principles but with a simpler head geometry (scattering foils or scanning magnets replace the photon target and flattening filter). The BEAM code was originally developed precisely for electron beam simulation, and its applicator/cutout modelling capabilities remain highly relevant. Our dedicated article on MC Modelling of External Photon Beams and the companion piece on Electron Beams and Brachytherapy Source Modelling expand on these topics.

Brachytherapy and Ion Beam Modelling

Monte Carlo serves as the reference dosimetry method for brachytherapy sources and is increasingly critical for ion beam therapy planning. Both domains present unique modelling challenges that deterministic algorithms handle poorly.

Brachytherapy Source Modelling

The TG-43 formalism, long the standard for brachytherapy dose calculation, relies on dose-rate tables derived from Monte Carlo simulation of individual sources in water. Monte Carlo codes compute dose-rate constants, radial dose functions, and anisotropy functions for each source design by simulating photon transport from the radioactive core through the source encapsulation and into a water phantom. These data populate the consensus datasets published by AAPM working groups.

Beyond TG-43, model-based dose calculation algorithms (MBDCAs) use Monte Carlo or deterministic transport (e.g., collapsed-cone, grid-based Boltzmann solvers) to account for tissue heterogeneities, applicator attenuation, and inter-source shielding. These effects can alter dose distributions by 5–15% near tissue-applicator boundaries compared to TG-43 — clinically relevant for vaginal cuff treatments, shielded ovoids, and eye plaques.

Ion Beam (Proton and Heavy Ion) Modelling

Ion beam therapy introduces nuclear interaction physics absent from photon calculations. When a proton traverses tissue, it may undergo elastic or inelastic nuclear collisions producing secondary protons, neutrons, alpha particles, and heavier fragments. Monte Carlo codes must employ accurate nuclear interaction models — the Bertini intranuclear cascade, binary cascade, or quantum molecular dynamics models in Geant4 — to predict these secondaries correctly.

For pencil beam scanning (PBS) delivery, each individual spot must be simulated. A full treatment plan may contain thousands of spots across multiple energy layers, making computational efficiency paramount. The nozzle geometry must be modelled to capture the beam’s Bragg peak shape and lateral penumbra accurately: scanning magnets, ionization chambers, and any range shifter for PBS, plus the range modulator wheel in passive-scattering nozzles.

A critical issue in proton therapy Monte Carlo is the choice between dose-to-water ($D_w$) and dose-to-medium ($D_m$). Analytical pencil-beam algorithms report $D_w$ by convention, but Monte Carlo naturally scores $D_m$. In bone, the difference can exceed 10%. The clinical community has not reached consensus on which quantity to prescribe, making this an active area of research and debate. Explore these topics further in our articles on Electron Beams and Brachytherapy Source Modelling and Ion Beams and Treatment Device Design with MC.

Dynamic Beam Delivery and 4D Monte Carlo

Modern radiotherapy rarely uses static fields. IMRT, VMAT, tomotherapy, and scanned proton beams all involve dynamic beam delivery where the aperture, intensity, or energy changes continuously during irradiation. Monte Carlo simulation of these techniques requires synchronizing particle transport with the time-dependent machine state.

IMRT and VMAT Simulation

For step-and-shoot IMRT, each segment can be simulated independently and the resulting dose distributions summed, weighted by monitor units. For sliding-window IMRT and VMAT, the continuously moving MLC leaves must be discretized into time intervals. The accuracy of the simulation depends on temporal resolution: too coarse, and the dose in high-gradient regions near leaf edges becomes inaccurate.

VMAT adds gantry rotation to the problem. A typical VMAT arc is divided into control points (usually 2–4 degrees apart), and Monte Carlo simulates each sub-arc as a static field at the mean gantry angle. Interpolation between control points for MLC positions and dose rates must be handled carefully.
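
A minimal sketch of this discretization follows. It assumes a simplified, hypothetical control-point format (gantry angle plus a cumulative meterset weight rising from 0 to 1) and an arc that does not cross the 0/360 degree wrap; the dose-per-MU values are made up for illustration. Each pair of consecutive control points becomes one static field at the mean gantry angle, and the per-field doses are summed with their monitor units as weights, just as for step-and-shoot segments.

```python
def sub_arcs(control_points, total_mu):
    """Turn consecutive control points into static sub-arc fields.

    control_points: list of (gantry_angle_deg, cumulative_meterset_weight).
    Assumes the arc does not cross the 0/360 degree wrap.
    """
    arcs = []
    for (a0, w0), (a1, w1) in zip(control_points, control_points[1:]):
        arcs.append({
            "gantry_deg": 0.5 * (a0 + a1),     # mean gantry angle of the sub-arc
            "mu": (w1 - w0) * total_mu,        # monitor units delivered in it
        })
    return arcs

def total_dose(dose_per_mu_by_arc, arcs):
    """Sum per-sub-arc dose grids (dose per MU, flat lists) weighted by MU."""
    n_voxels = len(dose_per_mu_by_arc[0])
    total = [0.0] * n_voxels
    for dose_per_mu, arc in zip(dose_per_mu_by_arc, arcs):
        for i in range(n_voxels):
            total[i] += arc["mu"] * dose_per_mu[i]
    return total

control_points = [(180.0, 0.0), (184.0, 0.25), (188.0, 0.5), (192.0, 1.0)]
arcs = sub_arcs(control_points, total_mu=400.0)

# Made-up dose-per-MU values for three voxels, one list per sub-arc.
dose_per_mu = [
    [0.010, 0.020, 0.000],
    [0.010, 0.020, 0.010],
    [0.005, 0.020, 0.010],
]
dose = total_dose(dose_per_mu, arcs)
```

Here the three sub-arcs sit at 182, 186, and 190 degrees and carry 100, 100, and 200 MU. A finer control-point spacing gives more sub-arcs and a closer approximation to the continuous delivery, at the cost of more static-field simulations.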

4D Monte Carlo and Patient Motion

Respiratory motion during lung and liver treatments causes interplay effects between the moving tumour and the dynamic MLC or scanning beam. Four-dimensional Monte Carlo (4D MC) addresses this by simulating dose delivery across multiple breathing phases, then accumulating dose on a reference phase using deformable image registration (DIR).

The 4D MC workflow involves: (1) acquire a 4D CT scan (typically 10 breathing phases); (2) generate deformation vector fields mapping each phase to the reference phase; (3) simulate dose on each phase geometry with the appropriate beam aperture and intensity for that time interval; (4) warp each phase’s dose distribution to the reference phase using the deformation vectors; (5) sum the warped doses. This voxel-warping approach captures interplay effects that static 3D calculations miss entirely.
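
Steps (4) and (5) can be sketched on a one-dimensional toy grid. The example assumes integer voxel displacements and nearest-neighbour lookup, which is far cruder than a real deformable registration; the dose values, displacement fields, and phase weights are all made up for illustration.

```python
def accumulate_4d(phase_doses, dvfs, weights):
    """Warp each phase dose to the reference grid and sum.

    phase_doses[p][j]: dose in voxel j of phase p.
    dvfs[p][i]: integer displacement taking reference voxel i to its
                position in phase p (toy stand-in for a deformation field).
    weights[p]: fraction of the delivery spent in phase p.
    """
    n = len(phase_doses[0])
    total = [0.0] * n
    for dose, dvf, w in zip(phase_doses, dvfs, weights):
        for i in range(n):
            j = min(max(i + dvf[i], 0), n - 1)   # clamp to the grid
            total[i] += w * dose[j]
    return total

# Phase 0 is the reference; in phase 1 the anatomy sits one voxel further along.
phase_doses = [
    [0.0, 1.0, 2.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 2.0, 1.0, 0.0],
]
dvfs = [[0] * 6, [1] * 6]
weights = [0.5, 0.5]

accumulated = accumulate_4d(phase_doses, dvfs, weights)
unwarped = [0.5 * a + 0.5 * b for a, b in zip(*phase_doses)]
```

With the displacement applied, the accumulated dose recovers the peak that the tissue actually received, whereas adding the phase doses without warping smears the peak across two voxels. Real implementations interpolate in three dimensions, and some map deposited energy and mass rather than dose.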

Dose mapping accuracy depends heavily on the deformable registration quality, particularly near sliding interfaces (e.g., lung-chest wall). Errors in the deformation field propagate directly into the accumulated dose. Research into biomechanical DIR models constrained by physical plausibility aims to mitigate these artifacts. For complete coverage of dynamic delivery simulation, see our article on Dynamic Beam Delivery and 4D Monte Carlo.

Clinical Photon Dose Calculation with Monte Carlo

Monte Carlo treatment planning for photon beams is now commercially available and increasingly adopted, especially for challenging clinical scenarios. Several commercial systems offer MC or MC-quality dose engines.

| System | Algorithm | Type | Typical Calc Time (VMAT) |
|---|---|---|---|
| Elekta Monaco | XVMC / VMC++ | Full MC | 2–8 min |
| Varian Eclipse (AcurosXB) | Linear Boltzmann Transport (GBBS) | Deterministic (MC-benchmarked) | 1–5 min |
| RayStation | Collapsed Cone + MC (photons/protons) | Full MC available | 3–10 min |
| Accuray Precision | MC (CyberKnife, TomoTherapy) | Full MC | 5–20 min |

Commissioning a Monte Carlo TPS requires the same beam data as any dose algorithm — depth-dose curves, profiles, output factors — but the physicist must also validate the system’s underlying transport parameters. Because Monte Carlo is inherently stochastic, the TPS must report statistical uncertainty per voxel, and the physicist must understand how to interpret noisy dose distributions. A 2% per-voxel uncertainty, common in routine clinical calculations, creates dose-volume histogram (DVH) uncertainty that can mask clinically meaningful differences.

Where Monte Carlo truly earns its place is in heterogeneous geometries. Lung treatments (low-density tissue surrounding the tumour), head-and-neck plans (air cavities, bone, dental implants), and breast treatments with tissue-expander implants all benefit from Monte Carlo’s first-principles physics. Studies consistently show that pencil-beam convolution algorithms overestimate target coverage in lung by 3–8% compared to Monte Carlo, potentially leading to underdosing. For more on the independent verification of clinical dose calculations, see our article on Second Dose Calculation System for Treatment Verification. Our dedicated article on Photons: Clinical Considerations and Applications covers commissioning workflows and clinical case studies.

Patient Dose Calculation: Uncertainties and Practical Methods

Translating Monte Carlo simulation into clinically usable patient dose distributions requires addressing several practical challenges: CT-to-material conversion, statistical noise, and computation time.

CT Number to Material Conversion

Patient CT data must be converted into material assignments for Monte Carlo transport. The standard approach uses a piecewise-linear calibration curve mapping Hounsfield units to mass density, combined with a tissue segmentation scheme that assigns elemental compositions. Errors in this conversion — particularly for high-Z materials like hip prostheses or dental fillings — directly affect dose accuracy. Dual-energy CT (DECT) improves material identification by providing additional information about effective atomic number.
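
A rough sketch of such a conversion is shown below. The calibration points and the Hounsfield-unit thresholds are hypothetical placeholders chosen for illustration; a clinical curve must come from scanning a calibration phantom on the scanner in use, and real segmentation schemes use many more tissue bins.

```python
from bisect import bisect_right

# Hypothetical (HU, mass density in g/cm^3) calibration points.
CALIBRATION = [(-1000.0, 0.001), (0.0, 1.000), (1000.0, 1.600), (3000.0, 2.800)]

# Hypothetical upper HU bounds for a four-material segmentation.
MATERIAL_UPPER_BOUNDS = [(-950.0, "air"), (-200.0, "lung"), (150.0, "soft tissue")]

def hu_to_density(hu, table=CALIBRATION):
    """Piecewise-linear interpolation, clamped at both ends of the table."""
    hus = [point[0] for point in table]
    if hu <= hus[0]:
        return table[0][1]
    if hu >= hus[-1]:
        return table[-1][1]
    k = bisect_right(hus, hu)              # table[k-1] <= hu < table[k]
    (h0, d0), (h1, d1) = table[k - 1], table[k]
    return d0 + (d1 - d0) * (hu - h0) / (h1 - h0)

def hu_to_material(hu):
    """Assign a material label from HU thresholds; anything above the last is bone."""
    for upper, name in MATERIAL_UPPER_BOUNDS:
        if hu < upper:
            return name
    return "bone"

voxels_hu = [-1000.0, -700.0, 40.0, 800.0]
converted = [(hu_to_material(hu), hu_to_density(hu)) for hu in voxels_hu]
```

The clamping at the top of the table is exactly where high-Z implants cause trouble: a saturated CT number maps to the last calibration point regardless of the true material, which is one reason such objects are often contoured and overridden manually.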

Scoring Dose: Dosels and Resolution

The Monte Carlo scoring grid (voxels, sometimes called “dosels” for dose elements) does not need to match the CT resolution. Coarser scoring grids reduce statistical noise but may miss steep dose gradients. A common clinical choice is 2–3 mm isotropic scoring resolution, with finer grids (1 mm) for stereotactic applications. The relationship between statistical uncertainty and scoring volume is:

$$\sigma_D \propto \frac{1}{\sqrt{N \cdot V}}$$

where $V$ is the voxel volume and $N$ is the number of histories. Larger voxels accumulate more interactions, reducing statistical noise for the same number of histories.
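
A short worked example makes the scaling concrete. The function names and the pilot-run numbers are hypothetical, and the scaling is treated as exact although in practice it is only approximate.

```python
import math

def histories_needed(n_pilot, sigma_pilot, sigma_target):
    """Histories required to reach sigma_target, given a pilot run (sigma ~ 1/sqrt(N))."""
    return math.ceil(n_pilot * (sigma_pilot / sigma_target) ** 2)

def sigma_after_regrid(sigma, side_old_mm, side_new_mm):
    """Uncertainty after changing cubic voxel side length (sigma ~ 1/sqrt(V))."""
    volume_ratio = (side_new_mm / side_old_mm) ** 3
    return sigma / math.sqrt(volume_ratio)

# Pilot run: 10 million histories gave 2% uncertainty; the target is 1%.
n_required = histories_needed(10_000_000, 2.0, 1.0)

# Same histories, but scoring on 3 mm voxels instead of 2 mm voxels.
sigma_coarse = sigma_after_regrid(2.0, 2.0, 3.0)
```

Halving the uncertainty costs four times the histories (40 million here), while moving from 2 mm to 3 mm voxels brings the 2% figure down to roughly 1.1% at no extra cost in histories, at the price of spatial resolution.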

Denoising Techniques

Statistical noise is the Achilles’ heel of clinical Monte Carlo. Running more histories reduces noise but increases computation time. Denoising algorithms offer an alternative: calculate with moderate statistics, then apply a filter to smooth the noise while preserving dose gradients. Techniques range from simple Savitzky-Golay filters to adaptive wavelet thresholding and, more recently, deep learning–based denoisers trained on high-statistics reference distributions.

The kugel method takes a different approach entirely: pre-computing electron transport through small spherical geometries and using these as lookup tables during patient simulation. This can speed up electron transport by orders of magnitude with minimal accuracy loss. More details on these methods appear in our article on Patient Dose Calculation and Electron Applications.

Electrons, Protons, and Advanced Clinical Applications

Monte Carlo simulation is particularly valuable for particle therapies where the physics of dose deposition differs fundamentally from conventional photon treatments.

Electron Beam Therapy

Conventional electron beam algorithms (pencil-beam models) struggle with lateral scatter in heterogeneous anatomy. When an electron beam passes through an air cavity (for instance, in the nasal passages or paranasal sinuses), electrons already deflected in the overlying tissue cross the cavity with almost no further scattering or energy loss, so the beam spreads laterally and lateral scatter equilibrium is lost, producing hot and cold spots where the beam re-enters tissue. Pencil-beam models, which assume a Gaussian lateral spread, cannot reproduce this behaviour. Monte Carlo captures the characteristic broadening of electron beams behind air cavities and the increased surface dose downstream of bone with full physical fidelity. For modulated electron radiation therapy (MERT), an emerging technique using electron IMRT with energy and intensity modulation, Monte Carlo is essentially mandatory. The complex field shapes, mixed energies, and steep dose gradients at field junctions overwhelm analytical algorithms that were designed for simpler, single-energy, single-field geometries.

Proton Therapy

| Parameter | Analytical Pencil Beam | Monte Carlo |
|---|---|---|
| Heterogeneity handling | Water-equivalent path length scaling | Full nuclear and electromagnetic transport |
| Nuclear interactions | Parameterized correction | Explicit nuclear models |
| Range uncertainty | ±3.5% + 1 mm (clinical margin) | Potentially reduced to ±2.5% + 1 mm |
| Dose-to-water vs. dose-to-medium | Reports $D_w$ by convention | Naturally scores $D_m$; $D_w$ via Bragg-Gray conversion |
| Secondary neutron dose | Not calculated | Included via nuclear models |
| Computation time | Seconds | Minutes to hours (improving with GPU) |

In proton therapy, Monte Carlo’s greatest value lies in reducing range uncertainty. The Bragg peak’s sharp distal falloff means that a few millimetres of range error can shift the high-dose region from tumour into critical normal tissue. Monte Carlo, by modelling the actual nuclear and electromagnetic physics rather than relying on water-equivalent scaling, provides more accurate predictions of where the Bragg peak falls in heterogeneous anatomy — particularly near bone, air cavities, and metallic implants.
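
To put the range-uncertainty recipes into numbers, the sketch below evaluates the two margins quoted in this guide (3.5% + 1 mm and 2.5% + 1 mm) for a hypothetical beam with a 20 cm range. The function name and the chosen range are illustrative assumptions.

```python
def range_margin_mm(range_mm, relative_uncertainty, fixed_mm):
    """Distal margin = relative uncertainty x beam range + fixed term."""
    return relative_uncertainty * range_mm + fixed_mm

beam_range_mm = 200.0   # hypothetical beam range of 20 cm

margin_analytical = range_margin_mm(beam_range_mm, 0.035, 1.0)
margin_monte_carlo = range_margin_mm(beam_range_mm, 0.025, 1.0)
margin_saved = margin_analytical - margin_monte_carlo
```

For this beam the margin shrinks from about 8 mm to about 6 mm. Because the relative term scales with range, the saving grows for deeper targets.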

Total skin electron therapy (TSET) presents another niche where Monte Carlo excels. The large treatment distances, angled beams, and curved patient surface make analytical dosimetry unreliable. Monte Carlo simulation of the TSET setup — including room scatter, beam spoilers, and patient self-shielding — provides the most accurate dose distributions available.

Detailed treatment of these clinical applications appears in our articles on Protons and Advanced QA with Monte Carlo and TSET and Patient Modelling in Brachytherapy.

Monte Carlo–Based Quality Assurance

Monte Carlo provides a powerful independent check on treatment planning system calculations. As an independent dose verification tool, MC can detect systematic errors in TPS commissioning, algorithm limitations, and plan-specific dosimetric issues that measurement-based QA might miss.

The concept is straightforward: recalculate the patient plan using a Monte Carlo engine completely independent of the TPS, then compare the two dose distributions using gamma analysis or dose-difference criteria. Gamma evaluation combines dose-difference and distance-to-agreement metrics into a single index per point, with $\gamma \le 1$ counted as a pass. Discrepancies exceeding clinical tolerances (typically 3%/2 mm for IMRT/VMAT, or 2%/2 mm for stereotactic plans) trigger investigation. The gamma passing rate (the percentage of voxels with $\gamma \le 1$) serves as the primary quality metric.
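
A brute-force, one-dimensional version of the gamma calculation is sketched below. It assumes global dose normalization to the reference maximum and searches only the sampled points without interpolation, so it is a teaching aid rather than a clinical tool. The profiles are made up.

```python
import math

def gamma_1d(x_ref, d_ref, x_eval, d_eval, dose_tol_frac, dta_mm):
    """Global 1D gamma: for each reference point, minimise over evaluated points."""
    d_norm = dose_tol_frac * max(d_ref)          # global dose criterion
    gammas = []
    for xr, dr in zip(x_ref, d_ref):
        best = min(
            math.sqrt(((xe - xr) / dta_mm) ** 2 + ((de - dr) / d_norm) ** 2)
            for xe, de in zip(x_eval, d_eval)
        )
        gammas.append(best)
    return gammas

def passing_rate(gammas):
    """Percentage of points with gamma <= 1."""
    return 100.0 * sum(g <= 1.0 for g in gammas) / len(gammas)

x = [float(i) for i in range(11)]                # positions in mm
d_ref = [0.0, 0.0, 50.0, 100.0, 100.0, 100.0, 100.0, 100.0, 50.0, 0.0, 0.0]

# Same profile shifted by 1 mm: large local dose differences, small spatial error.
d_shifted = [0.0, 0.0, 0.0, 50.0, 100.0, 100.0, 100.0, 100.0, 100.0, 50.0, 0.0]
# Same profile scaled by 10%: no spatial error, large dose error.
d_scaled = [1.1 * d for d in d_ref]

gamma_shifted = gamma_1d(x, d_ref, x, d_shifted, 0.03, 2.0)
gamma_scaled = gamma_1d(x, d_ref, x, d_scaled, 0.03, 2.0)
rate_shifted = passing_rate(gamma_shifted)
rate_scaled = passing_rate(gamma_scaled)
```

At 3%/2 mm the shifted profile passes everywhere, because a matching dose is always found within 1 mm, even though a plain dose-difference test would fail in the penumbra. The scaled profile fails in the plateau, where no nearby point brings the dose within tolerance.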

Several research groups and commercial vendors have developed MC-based secondary check systems. These range from full EGSnrc/BEAMnrc recalculations (research) to fast GPU-accelerated MC engines integrated into QA software. The advantage over measurement-based QA (ion chamber arrays, film, EPID dosimetry) is that Monte Carlo checks the entire 3D dose distribution in the patient geometry, not a surrogate phantom geometry.

For patient-specific QA in IMRT and VMAT, Monte Carlo can detect MLC modelling errors, small-field output factor discrepancies, and heterogeneity calculation failures that may not manifest in phantom measurements. The trend toward replacing or supplementing measurement-based QA with calculation-based QA is growing, supported by AAPM Task Group reports. This topic is covered in depth in our article on Protons and Advanced QA with Monte Carlo, while practical aspects of monitor unit verification are discussed in our article on Monitor Unit Calculation.

Artificial Intelligence and the Future of Monte Carlo

Deep learning is poised to transform Monte Carlo simulation from a computationally expensive gold standard into a near-instantaneous clinical tool. Several research directions are converging to make this possible.

Neural Network Dose Engines

Deep neural networks trained on large datasets of Monte Carlo dose distributions can predict dose in seconds rather than minutes or hours. These “learned” dose engines take patient CT data and beam parameters as input and output a 3D dose distribution that approximates the full Monte Carlo result. Architectures based on 3D U-Nets, generative adversarial networks (GANs), and vision transformers have demonstrated agreement with MC within 1–2% for photon and proton beams in published studies.

The challenge is generalization. A network trained on prostate plans may fail for head-and-neck anatomy. Transfer learning, data augmentation, and physics-informed loss functions help, but rigorous clinical validation remains essential before deployment.

GPU-Accelerated Monte Carlo

Graphics processing units (GPUs) offer massive parallelism well-suited to Monte Carlo transport, where thousands of independent particle histories can be simulated simultaneously. GPU-accelerated codes like gDPM, GPUMCD, and the GPU backend in RayStation have demonstrated speed-ups of 50–200× compared to single-threaded CPU codes, bringing full Monte Carlo calculation times below one minute for many clinical scenarios.

MC-Augmented Workflows

The future likely involves hybrid workflows: fast analytical or AI-based dose engines for plan optimization (where many iterations are needed), followed by a final Monte Carlo verification calculation. Adaptive radiotherapy, where plans are recalculated daily based on cone-beam CT or MR images, further drives the need for speed. Real-time Monte Carlo during treatment delivery, while not yet achievable, is a long-term research goal.

Quantum computing represents a more speculative but intriguing possibility. Quantum random number generation and quantum-accelerated sampling could, in principle, accelerate Monte Carlo convergence. Current quantum hardware is far from practical for this application, but theoretical work has begun. Our article on AI, Trends and Future of Monte Carlo in Radiotherapy explores these emerging directions in full.

Book Structure and Dedicated Articles

The second edition of Monte Carlo Techniques in Radiation Therapy organizes 17 chapters into three parts. Below is an overview of the book’s structure and links to our dedicated articles that cover each set of chapters in depth.

| Book Part | Chapters | Key Topics | Detailed Article |
|---|---|---|---|
| Part I: Introduction | Ch 1 | History of Monte Carlo | Monte Carlo Fundamentals |
| Part I: Introduction | Ch 2 | Basics of MC simulation | Monte Carlo Fundamentals |
| Part I: Introduction | Ch 3 | Variance reduction techniques | Monte Carlo Fundamentals |
| Part II: Source Modelling | Ch 4 | External photon beams | MC Modelling of Photon Beams |
| Part II: Source Modelling | Ch 5–6 | Electron beams, brachytherapy | Electrons & Brachytherapy Modelling |
| Part II: Source Modelling | Ch 7 | Scanned ion beams | Ion Beams & Device Design |
| Part II: Source Modelling | Ch 8 | Treatment device design | Ion Beams & Device Design |
| Part II: Source Modelling | Ch 9 | Dynamic delivery & 4D MC | Dynamic Delivery & 4D MC |
| Part III: Patient Dose Calculation | Ch 10 | Photons: clinical considerations | Photons: Clinical Applications |
| Part III: Patient Dose Calculation | Ch 11 | Patient dose calculation | Patient Dose Calc & Electrons |
| Part III: Patient Dose Calculation | Ch 12 | Electrons: clinical considerations | Patient Dose Calc & Electrons |
| Part III: Patient Dose Calculation | Ch 13–14 | Protons clinical, MC-based QA | Protons & Advanced QA |
| Part III: Patient Dose Calculation | Ch 15–16 | TSET, brachytherapy patient modelling | TSET & Brachytherapy Modelling |
| Part III: Patient Dose Calculation | Ch 17 | AI and future of MC | AI & Future of MC |

Each dedicated article provides a deep technical discussion with worked examples, key equations, and clinical relevance. We recommend reading the fundamentals article first, then branching into whichever clinical application area is most relevant to your practice.

Quick-Reference Comparison Tables

The following table summarizes the major general-purpose Monte Carlo codes used in medical physics research and their distinguishing features. Choosing the right code depends on the application: photon beam dosimetry research gravitates toward EGSnrc for its unmatched accuracy in ion chamber simulations, while proton therapy centres increasingly adopt TOPAS or Geant4 for treatment head modelling. FLUKA dominates heavy-ion therapy research due to its well-validated nuclear interaction models, and PENELOPE remains the preferred choice for low-energy applications such as brachytherapy source characterization and diagnostic radiology dosimetry.

| Code | Origin | Language | Primary Strength | Medical Physics Niche |
|---|---|---|---|---|
| EGSnrc / BEAMnrc | NRC Canada | Fortran/C | Dosimetry accuracy | LINAC modelling, ion chamber simulation, reference dosimetry |
| MCNP / MCNP6 | LANL, USA | Fortran | Neutron transport | Shielding, boron neutron capture therapy |
| Geant4 | CERN | C++ | Modular, extensible | Proton/ion therapy, detector design, GATE (PET/SPECT) |
| PENELOPE | U. Barcelona | Fortran | Low-energy electron transport | Brachytherapy, diagnostic radiology, microdosimetry |
| FLUKA | CERN / INFN | Fortran | Hadronic interactions | Heavy-ion therapy, secondary neutrons, radioprotection |
| TOPAS | MGH / SLAC | C++ (Geant4 wrapper) | User-friendly proton therapy | Proton beam modelling, patient QA, educational tool |

Why Monte Carlo Matters for Modern Radiotherapy

The trajectory of Monte Carlo in radiotherapy points in one direction: broader adoption, faster computation, and deeper integration into clinical workflows. Three decades ago, a single Monte Carlo dose calculation could consume days of CPU time. Today, GPU-accelerated codes and commercial TPS implementations deliver MC-quality results in minutes. Tomorrow, AI-augmented engines may reduce this to seconds.

But speed is only part of the story. Monte Carlo matters because it gets the physics right. In lung SBRT, where lateral electronic disequilibrium can cause pencil-beam algorithms to overestimate target dose by 5–10%, Monte Carlo reveals the lower dose that is actually delivered and so protects patients from underdosing. In proton therapy, where a few millimetres of range uncertainty can mean the difference between tumour control and normal tissue toxicity, Monte Carlo reduces that uncertainty. In brachytherapy, where tissue heterogeneities and applicator shielding invalidate the water-based TG-43 assumptions, Monte Carlo provides the path forward.

For medical physicists, fluency in Monte Carlo principles is no longer optional. Even if you never write a line of EGSnrc or Geant4 code, understanding how your TPS dose engine works, including its assumptions, limitations, and failure modes, requires Monte Carlo literacy. The commissioning, validation, and quality assurance of MC-based planning systems demand a grasp of statistical uncertainty, variance reduction, and source modelling that this guide and its ten dedicated articles aim to provide.

The second edition of Monte Carlo Techniques in Radiation Therapy by Verhaegen and Seco remains the most comprehensive single resource on this subject. We have distilled its 17 chapters into this guide and the following dedicated articles, each designed to be read independently while forming a coherent whole:

- Monte Carlo Fundamentals in Radiotherapy — history, random sampling, transport algorithms, variance reduction

- MC Modelling of External Photon Beams — LINAC simulation, phase-space files, source models

- Electron Beams and Brachytherapy Source Modelling — electron beam codes, TG-43, source design

- Ion Beams and Treatment Device Design with MC — proton/ion nozzles, nuclear models, device optimization

- Dynamic Beam Delivery and 4D Monte Carlo — IMRT, VMAT, respiratory motion, dose accumulation

- Photons: Clinical Considerations and Applications — commercial MC TPS, commissioning, heterogeneity corrections

- Patient Dose Calculation and Electron Applications — CT conversion, denoising, kugels, MERT

- Protons and Advanced QA with Monte Carlo — proton MC, dose-to-water vs. dose-to-medium, independent verification

- TSET and Patient Modelling in Brachytherapy — total skin electron therapy, brachytherapy heterogeneity corrections

- AI, Trends and Future of Monte Carlo in Radiotherapy — deep learning dose engines, GPU acceleration, adaptive therapy

Start with the fundamentals, explore the areas most relevant to your clinical practice, and return to the reference tables in this guide whenever you need a quick comparison. Monte Carlo simulation has earned its place as the cornerstone of radiation dose calculation — understanding it will make you a better physicist, a more critical evaluator of treatment plans, and a stronger advocate for patient safety.