What the study found

Over 18 months of real-world deployment, Aidoc’s pulmonary embolism (PE) algorithm helped radiologists catch 26 additional PE cases out of 29,500 CT pulmonary angiograms (CTPA) — a contribution that the authors themselves labeled “selective but meaningful.” The paper, published in Radiology: Artificial Intelligence, is one of the first quantitative looks at how an AI model behaves outside the curated environments in which it is usually trained.

A persistent criticism of radiology AI is the performance drop between bench and bedside — sensitivity and specificity routinely fall by 20 to 30 percentage points once an algorithm leaves the regulatory dataset and meets the noise of emergency, inpatient, and outpatient settings. The researchers set out to measure exactly that delta inside an integrated health network.

Methodology: clinical reality, not curated retrospective

The team analyzed 29,500 CTPA exams acquired between 2021 and 2023. The AI processed the images in real time, and radiologists then interpreted each exam already aware of the algorithm's call. The cohort included emergency, inpatient, and outpatient cases, a setup that mirrors the daily grind of any full-time PACS operation.

That design matters: earlier studies often evaluated AI in isolation, without the human review loop that exists in practice. As we discussed in our coverage of five questions every imaging director should ask before adopting AI, bench metrics rarely survive a first shift in the reading room.

Key results

The numbers show a setting in which radiologist and AI act as two complementary reads, and the union of both outperforms either alone:

- Sensitivity: 99% for radiologist + AI vs. 85% for AI alone. The sensitivity gain comes from the human, not the machine.

- Specificity essentially tied (99.8% vs. 99.5%).

- Human–AI agreement: 98%. Higher on negatives (98%) than positives (94%).

- Disagreement in 2.2% of cases. A panel of expert thoracic radiologists sided with the human in 89% of those disagreements.

- Of 3,300 PE-positive exams, 0.81% (26 cases) were caught only by AI.
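To put those figures in proportion, the short sketch below reruns the arithmetic using only the rounded numbers quoted in this article, not the study's raw counts. The variable names are illustrative. With the rounded 3,300 positives the AI-only share works out to roughly 0.79%, a little under the quoted 0.81%, which suggests the underlying positive count was somewhat lower than the rounded figure.

```python
# Figures as quoted in the article (rounded); not the study's raw data.
TOTAL_EXAMS = 29_500
PE_POSITIVE_EXAMS = 3_300
AI_ONLY_CATCHES = 26
DISAGREEMENT_RATE = 0.022  # 2.2% of cases


def percent(part: float, whole: float) -> float:
    """Return part as a percentage of whole."""
    return 100.0 * part / whole


# Share of PE-positive exams that only the AI flagged.
ai_only_share_of_positives = percent(AI_ONLY_CATCHES, PE_POSITIVE_EXAMS)

# Share of all exams that ended up as AI-only catches.
ai_only_share_of_all = percent(AI_ONLY_CATCHES, TOTAL_EXAMS)

# How many exams were read, on average, per AI-only catch.
exams_per_ai_only_catch = TOTAL_EXAMS / AI_ONLY_CATCHES

# Approximate number of exams where human and AI disagreed.
disagreement_exams = round(DISAGREEMENT_RATE * TOTAL_EXAMS)

print(f"AI-only share of positives: {ai_only_share_of_positives:.2f}%")
print(f"AI-only share of all exams: {ai_only_share_of_all:.3f}%")
print(f"Exams per AI-only catch:    {exams_per_ai_only_catch:.0f}")
print(f"Exams with disagreement:    {disagreement_exams}")
```

Read this way, the algorithm adds roughly one otherwise-missed PE per 1,100 or so exams, while the disagreement pool that needs a second look runs to several hundred studies.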

Why 26 cases matter

At first glance, 26 extra detections in 29,500 exams looks small. But PE carries high acute lethality, and every missed diagnosis translates into immediate clinical risk — and, in the U.S. context, into legal exposure. The authors emphasized this point: AI behaved as a safety net for a small but critical subset of cases that slipped past human attention, not as a substitute for the read.

Recent literature shows similar patterns elsewhere: AI outperformed radiologists in detecting early pancreatic cancer, and the Lunit algorithm boosted mammography specificity by 11%. The common thread: AI shines on subtle findings, distraction-laden cases, or those with low clinical pre-test probability.

Implications for clinical practice

Three practical takeaways jump out:

- A negative AI call is more reliable than a positive one. Agreement reaches 98% when the algorithm rules out PE. That signal could help departments prioritize worklists: AI-negative cases drop in the queue while AI-positive cases move up.

- The radiologist remains the arbiter. In 89% of disagreements, the expert panel sided with the human. Adopting AI is not outsourcing the read.

- Gains do not replace continuous auditing. AI sensitivity dropped to 85%, below Aidoc’s bench results. Without performance monitoring in production, that delta can grow silently.
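The worklist idea in the first takeaway can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how Aidoc or any PACS vendor implements prioritization: the `Exam` record, its fields, and the `prioritize` function are all hypothetical. Note that every exam stays in the queue and still gets a human read; only the order changes.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Exam:
    """Hypothetical worklist entry; field names are illustrative only."""
    accession: str
    received: datetime
    ai_flag_positive: bool


def prioritize(worklist: list[Exam]) -> list[Exam]:
    """Order exams so AI-positive studies come first, oldest first within each group.

    False sorts before True, so `not ai_flag_positive` puts flagged exams on top.
    Nothing is removed: AI-negative exams are still read, just later.
    """
    return sorted(worklist, key=lambda e: (not e.ai_flag_positive, e.received))


# Made-up exams purely for illustration.
queue = [
    Exam("A1", datetime(2024, 1, 1, 8, 0), ai_flag_positive=False),
    Exam("A2", datetime(2024, 1, 1, 8, 5), ai_flag_positive=True),
    Exam("A3", datetime(2024, 1, 1, 7, 55), ai_flag_positive=True),
]
ordered = prioritize(queue)
print([e.accession for e in ordered])
```

A real deployment would also have to weigh clinical urgency (a STAT emergency study versus a routine outpatient one), which this sketch deliberately leaves out.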

Context and outlook

The timing is symbolic: Aidoc raised $150 million in a round led by Goldman Sachs and Nvidia just weeks before publication. The capital backs a thesis the market still believes in — radiology AI as an orchestration layer, not a single reader — and the CTPA study lends substance to the pitch.

For radiologists handling growing CTPA volumes (especially in the post-COVID era, where PE has settled into the routine differential), the message is clear: integrating AI through the PACS can add a defensive layer, provided that (1) the service is willing to re-review the roughly 2% of cases where reader and algorithm disagree, (2) governance is in place to track algorithm performance over time, and (3) AI is treated as a second read, not blind triage.
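Condition (2) is the easiest to make concrete. The sketch below is one possible shape for that tracking, assuming a department logs each month how the AI's calls compared with the final adjudicated diagnosis. The `MonthlyCounts` record, the `months_below_floor` helper, and the 0.85 floor (borrowed from the AI-alone sensitivity reported above as a placeholder) are all hypothetical, and the counts are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MonthlyCounts:
    """AI calls for one month, scored against the final adjudicated diagnosis."""
    month: str
    true_pos: int
    false_neg: int
    true_neg: int
    false_pos: int


def sensitivity(c: MonthlyCounts) -> Optional[float]:
    """TP / (TP + FN); None when the month had no PE-positive exams."""
    positives = c.true_pos + c.false_neg
    return c.true_pos / positives if positives else None


def specificity(c: MonthlyCounts) -> Optional[float]:
    """TN / (TN + FP); None when the month had no PE-negative exams."""
    negatives = c.true_neg + c.false_pos
    return c.true_neg / negatives if negatives else None


def months_below_floor(history: list[MonthlyCounts],
                       sensitivity_floor: float = 0.85) -> list[str]:
    """Return the months in which AI sensitivity fell below the chosen floor."""
    flagged = []
    for counts in history:
        value = sensitivity(counts)
        if value is not None and value < sensitivity_floor:
            flagged.append(counts.month)
    return flagged


# Invented counts purely for illustration; not data from the study.
history = [
    MonthlyCounts("2024-01", true_pos=90, false_neg=10, true_neg=880, false_pos=20),
    MonthlyCounts("2024-02", true_pos=80, false_neg=20, true_neg=890, false_pos=10),
]
flagged = months_below_floor(history)
print(flagged)
```

In practice a month's positive count may be small enough that a single miss moves the rate noticeably, so a real audit would likely add confidence intervals or a rolling window before raising an alarm.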

As a limitation, the study was not randomized: radiologists read while already knowing the AI output, which can introduce cognitive bias (anchoring effect). Future blinded studies, where the human read precedes the AI reveal, will help separate genuine gain from that anchoring. Another open piece is impact analysis on hard endpoints (time-to-anticoagulation, 30-day mortality), which would link the radiologic metric to the clinical outcomes hospital leadership actually cares about.

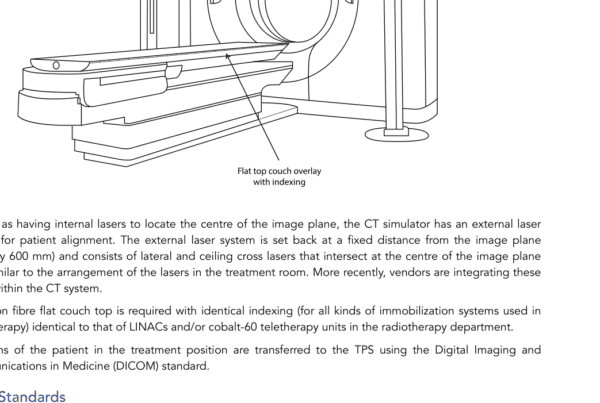

Finally, there is a less-discussed operational layer: the study describes AI running in real time with findings delivered to the radiologist along with the images. Implementing that requires mature integration between the algorithm, modality worklist, and PACS — something many services still struggle with. Without that plumbing, the marginal gain of 26 cases becomes operational noise.

Source: The Imaging Wire — AI for PE Detection: ‘Selective but Meaningful’