When a manufacturer maintains two photon engines within the same TPS, this usually says more about clinical reality than most marketing material does. On paper, it is tempting to summarize the story like this: one engine is the fast one, the other is the precise one. In practice, this reading is shallow. What matters is understanding why the two continue to coexist, in what type of case each one makes sense, and what the manufacturer itself accepts as validation limits.

In RayStation, this coexistence appears particularly clearly. The local regulatory documentation of RayStation v2025 SP1 states plainly that the system operates with two photon dose engines: collapsed cone (CC) and Monte Carlo (MC). This is not redundancy. It is a commercial and a clinical decision at the same time, designed to balance speed, workflow coverage, physical modeling and methodological responsibility.

If Acuros XB marked the entry of deterministic transport into the Eclipse ecosystem, RayStation's CC/MC pair shows another kind of maturity: maintaining a very strong analytical engine as the clinical basis and reserving Monte Carlo for contexts where more explicit physics really pays off.

In this Article

- 1. What RayStation itself says

- 2. The importance of the two engines sharing the same fluence base

- 3. What it means to use collapsed cone in RayStation

- 4. How RaySearch validates collapsed cone

- 5. Comparison of CC with other TPSs

- 6. Scope of machine and technique: where the IFU is more honest than advertising

- 7. What about RayStation's Monte Carlo?

- 8. Where the IFU itself imposes limits on enthusiasm for MC

- 9. Where CC and MC coexist in an intelligent way

- 10. IFU’s most important warning: dose convention compatibility

- 11. What the CC/MC pair teaches about commercial TPSs

- 12. What a mature service does with this coexistence

What RayStation itself says

The local regulatory text is very clear right from the start: RayStation has two photon dose engines, collapsed cone (CC) and Monte Carlo (MC). This already eliminates a common confusion. The system treats neither Monte Carlo as the only serious form of calculation nor collapsed cone as a legacy leftover. Both engines are part of the clinical architecture of the product.

The official white paper from RaySearch helps you understand the role of CC. In it, the clinical photon engine is described as based on classical principles of collapsed cone convolution superposition. The same document summarizes the calculation chain in four steps:

- fluence calculation from a multi-source model;

- transport of incident photons by ray tracing through the patient;

- energy redistribution with pre-computed EGSnrc kernels;

- separate calculation of electron contamination, added to the photon dose.

This description is valuable because it shows that RayStation's CC is not a “lightweight” algorithm in the trivial sense. It is a robust clinical engine, anchored in the collapsed cone tradition, with multi-source modeling and Monte Carlo kernels. This explains why it remains so relevant even in a system that also offers Monte Carlo.

The importance of the two engines sharing the same fluence base

Perhaps the most interesting aspect of the RayStation architecture is this: the manufacturer states that the Monte Carlo photon engine uses the same fluence computation in the head as the collapsed cone engine.

This greatly changes how divergences between the two should be interpreted. In many TPSs, the temptation is to assume that any major difference between engines arises from a completely different chain starting at the source. In RayStation, at least conceptually, the fluence modeling of the LINAC head remains aligned. What changes decisively is the physics of dose deposition in the patient.

This architecture has two useful consequences:

- it reduces the chance that gross differences between CC and MC are attributed solely to incompatible source models;

- it makes the comparison between the two engines more informative about the response of the medium and the type of transport used.

This does not eliminate the role of the beam model, of course. But it helps to focus the discussion on what the clinician really wants to know: how much of the observed difference arises from the way the dose is calculated within the patient.

What it means to use collapsed cone in RayStation

The collapsed cone was born to solve a classic problem: how to keep the physical heart of the convolution/superposition without paying the full cost of a full voxel-by-voxel superposition. Instead of transporting energy in all possible directions at prohibitive cost, the method collapses energy into a finite set of directions, efficiently preserving the total energy.
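
The idea of collapsing energy into a finite set of directions without losing any of it can be illustrated with a minimal sketch. This is not RayStation's implementation: the function name `cone_weights`, the number of polar bins and the toy angular shapes are all assumptions made for illustration. The sketch only shows that, once a kernel's angular distribution is binned into cones and normalized, the cone weights still sum to the full kernel energy.

```python
import math

def cone_weights(n_polar, angular_density, n_sub=2000):
    """Fraction of kernel energy assigned to each polar-angle bin.

    angular_density(theta) is a hypothetical energy density per unit solid
    angle. Each bin plays the role of one ring of collapsed cones.
    """
    edges = [math.pi * i / n_polar for i in range(n_polar + 1)]
    raw = []
    for lo, hi in zip(edges[:-1], edges[1:]):
        step = (hi - lo) / n_sub
        total = 0.0
        for k in range(n_sub):
            theta = lo + (k + 0.5) * step  # midpoint rule
            # sin(theta) is the solid-angle weight of a polar ring
            total += angular_density(theta) * math.sin(theta)
        raw.append(total * step)
    norm = sum(raw)
    # Normalizing enforces that the collapsed directions carry all the energy
    return [w / norm for w in raw]

def isotropic(theta):
    return 1.0

def forward_peaked(theta):
    # Toy shape only; real kernels come from Monte Carlo pre-computation
    return math.exp(-theta / 0.5)

weights_iso = cone_weights(6, isotropic)
weights_fwd = cone_weights(6, forward_peaked)
print(sum(weights_iso), sum(weights_fwd))
```

With the forward-peaked toy shape, most of the weight lands in the first bins, which is why a modest number of well-chosen directions can carry the bulk of the deposited energy.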

In RayStation, this philosophy is coupled to a multi-source fluence model. In the official documentation, the primary source is represented as a spatial elliptical Gaussian; the secondary source, as a circular Gaussian; and there are also two sources of contaminating electrons. The collimation is modeled at its physical positions, with a description of offsets, leaf tips and tongue-and-groove.

This architecture explains an important part of the engine’s clinical performance. CC doesn’t just depend on the kernel. It depends on:

- a physically reasonable description of the head;

- divergent ray tracing through the patient;

- scaling of energy deposition according to local properties;

- explicit addition of the electron contamination contribution.

In other words, RayStation's CC is far from being a simplified algorithm in the pejorative sense. It is a high-level clinical engine, built to handle most routine cases with good fidelity and controlled computational cost.

How RaySearch validates collapsed cone

This is where the local documentation is especially strong. The IFU of RayStation v2025 SP1 is not just an algorithmic description; it goes into validation in great detail.

According to the documentation, validation of the collapsed cone photon dose engine included:

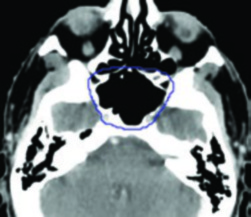

- point doses in homogeneous and heterogeneous phantoms;

- line doses;

- film;

- detectors like Delta4, MapCheck, ArcCheck, MatriXX, Octavius1500 and PTW 729;

- the IAEA test suite, with cases measured for an Elekta machine at 6 MV, 10 MV and 18 MV.

Acceptance criteria are described in terms of gamma, point dose difference and confidence levels. The document states that the overall accuracy was considered acceptable and that the algorithmic limitations identified are addressed in warnings and in the system’s technical reference.
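
To make the gamma criterion concrete, here is a minimal one-dimensional sketch of a global gamma evaluation. It is a teaching example, not the procedure used in the IFU: the 3%/3 mm defaults, the function names and the sample profiles are assumptions, and a clinical gamma analysis would also interpolate the evaluated distribution and work in 2D or 3D.

```python
import math

def gamma_1d(ref_pos, ref_dose, eval_pos, eval_dose,
             dose_tol=0.03, dist_tol_mm=3.0):
    """Global gamma value for each reference point (no interpolation).

    dose_tol is a fraction of the maximum reference dose and dist_tol_mm
    is the distance-to-agreement criterion. Both defaults are assumed.
    """
    d_max = max(ref_dose)
    gammas = []
    for xr, dr in zip(ref_pos, ref_dose):
        best = math.inf
        for xe, de in zip(eval_pos, eval_dose):
            dose_term = (de - dr) / (dose_tol * d_max)
            dist_term = (xe - xr) / dist_tol_mm
            best = min(best, math.hypot(dose_term, dist_term))
        gammas.append(best)
    return gammas

def pass_rate(gammas):
    """Fraction of points with gamma <= 1."""
    return sum(g <= 1.0 for g in gammas) / len(gammas)

# Hypothetical relative profile sampled every 1 mm
positions_mm = [0.0, 1.0, 2.0, 3.0, 4.0]
measured = [1.00, 0.98, 0.90, 0.50, 0.10]
calculated = [d * 1.02 for d in measured]  # uniform 2% overestimate

gammas = gamma_1d(positions_mm, measured, positions_mm, calculated)
print(pass_rate(gammas))
```

A point passes when some evaluated point lies close enough in the combined dose and distance metric, which is why gamma tolerates small spatial shifts in steep gradients that a pure point dose difference would reject.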

This excerpt is important for two reasons.

The first is that it repositions CC as a clinically serious engine, not as a mere preliminary calculation.

The second is that it shows a correct methodological attitude: validation is presented by scope, by technique and by type of measurement. This is exactly the opposite of the commercial simplification that turns every algorithm into a universal promise.

Comparison of CC with other TPSs

The same document states that RayStation's collapsed cone was compared to independent and well-established TPSs, including:

- Eclipse (Varian);

- Pinnacle³;

- Monaco (Elekta);

- Oncentra (Elekta);

- Precision (Accuray).

The text also says that, within the acceptance criteria used, the engine calculations could be considered equivalent to the clinical systems with which they were compared.

This point deserves careful reading. It does not mean that all TPS are “the same”. It means that, within the validation scope used, RayStation CC achieved agreement compatible with clinical practice compared to already consolidated commercial systems.

This is very different from saying there is no difference between engines. There is. But the useful comparison must always be situated in:

- treatment technique;

- geometry;

- machine;

- type of heterogeneity;

- beam model used.

Scope of machine and technique: where the IFU is more honest than advertising

A virtue of the local IFU is that it makes clear that algorithm coverage is not the same thing as uniform validation coverage. RayStation describes in great detail the machines and techniques covered:

- Varian, Elekta and Siemens in various configurations;

- FFF in examples like Siemens Artiste and Varian Halcyon;

- validation with m3 MLC in Varian Novalis;

- validation of standard VMAT for Varian, Elekta and Vero;

- specific validation for wave arcs in Vero and OXRAY;

- validation of CyberKnife with fixed cones, Iris and MLC;

- TomoTherapy support for the appropriate engine.

Important limitations also appear:

- VMAT in small fields is highly sensitive to the MLC parameters of the beam model;

- certain techniques should be treated as practically a new technique, requiring model validation and per-patient QA;

- some machines or modalities have partial coverage or require additional caution.

This type of information should guide any serious use of the system. The correct question is never “does the engine support VMAT?”, but “which VMAT, on which machine, with which beam model, within which validation scope?”.

What about RayStation's Monte Carlo?

The local IFU is also clear when describing the Monte Carlo photon engine. The most interesting conceptual point is this: the photon Monte Carlo dose engine uses the same fluence computation in the LINAC head as the collapsed cone dose engine.

This sentence is decisive because it shows where the engines separate. The head and fluence description are not necessarily the main divider. The big divider is how dose deposition within the patient is calculated.

RayStation's Monte Carlo was validated, according to the document, with:

- a representative subset of the measurements used for the CC;

- different energies from 4 MV to 20 MV;

- different LINAC models;

- wedges, cones and blocks;

- homogeneous and heterogeneous geometries;

- IAEA test suite;

- high-resolution AAPM TG-105 cases with heterogeneous inserts.

Furthermore, the text states that the in-patient MC calculation was cross-checked against EGSnrc in varied geometries, in materials such as water, lung, bone, aluminum and titanium, for fields from 0.4 x 0.4 cm² to 40 x 40 cm², both with and without a magnetic field.

This is the point where RayStation shows a strong philosophy: it is not enough to say that Monte Carlo is available; it is necessary to show what it was compared against and in what context.

Where the IFU itself imposes limits on enthusiasm for MC

Another merit of the documentation is that it does not treat Monte Carlo as universally available or universally validated for any machine. The local text is explicit:

- photon Monte Carlo does not support TomoTherapy;

- it has not been validated for Vero and Siemens LINACs;

- on certain platforms, it is up to the user to validate clinical use.

This kind of statement deserves more attention in discussions about “more advanced” engines. An algorithm can be physically stronger and still have more limited practical coverage across part of the installed machine base. For the service, this means one simple thing: there is no automatic replacement of CC with MC just because the second one looks more sophisticated.

There is always a prior question:

Is this engine validated, in this version, for this machine and for this technique?

Without this question, the comparison between engines becomes an abstraction.
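
One way a service could make that prior question routine is to encode the stated restrictions as a lookup that is consulted before an engine is selected. The sketch below is hypothetical: the names `Status`, `MC_PHOTON_RESTRICTIONS` and `mc_photon_status` are invented for illustration, and the table contains only the restrictions quoted above. Anything not listed defers to the IFU rather than being assumed valid.

```python
from enum import Enum

class Status(Enum):
    NOT_SUPPORTED = "not supported by the photon MC engine"
    NOT_VALIDATED = "not validated in the IFU for the photon MC engine"
    CHECK_IFU = "check the IFU for this version, machine and technique"

# Only the restrictions quoted in the text; keys are hypothetical labels.
MC_PHOTON_RESTRICTIONS = {
    "tomotherapy": Status.NOT_SUPPORTED,
    "vero": Status.NOT_VALIDATED,
    "siemens": Status.NOT_VALIDATED,
}

def mc_photon_status(machine_family: str) -> Status:
    """Return the documented restriction, or defer to the IFU if none is listed."""
    key = machine_family.strip().lower()
    return MC_PHOTON_RESTRICTIONS.get(key, Status.CHECK_IFU)

for family in ("TomoTherapy", "Vero", "Elekta"):
    print(family, "->", mc_photon_status(family).value)
```

The default is deliberately conservative: the absence of a listed restriction is treated as a prompt to read the documentation, not as confirmation of validation.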

Where CC and MC coexist in an intelligent way

The coexistence of the two engines makes sense because they answer slightly different questions.

The CC tends to be the natural choice when:

- the clinical routine requires high speed;

- the geometry is within the territory well covered by collapsed cone;

- the service needs a robust engine for large volume planning;

- the expected difference from MC would not change the clinical decision.

The MC tends to gain ground when:

- there are more aggressive heterogeneities;

- the case involves more challenging materials;

- the field is very small;

- the residual uncertainty of the analytical engine may be clinically relevant;

- the service wants to refine the final calculation in more critical situations.

This division should not be read as a rigid rule, but as clinical reasoning. The common mistake is to turn MC into an automatic symbol of superiority, when the real problem is often in the beam model, in the material mapping, in the dose grid or in the chosen technique.

IFU’s most important warning: dose convention compatibility

Perhaps the most clinically underrated part of the entire document is the warning about engine comparison.

RayStation explicitly warns that doses computed with different engines should be combined or compared with caution when the dose convention differs and the plan is sensitive to high atomic number materials.

The local text states:

- the photon collapsed cone dose engine computes dose to water with transport in water of variable density;

- the photon Monte Carlo dose engine in RayStation v2025 reports dose to medium with transport in medium;

- the difference is usually small in tissues other than bone;

- in bone or high-Z materials, the difference may be relatively large.

This observation is worth its weight in gold because it shows how coexistence between engines requires methodological maturity. It is not enough to compare two DVH curves if they do not represent exactly the same physical convention.
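
A simple way to keep this warning from being forgotten is to store the dose convention alongside every dose value and let the comparison itself raise the caveat. The sketch below is an illustration under stated assumptions, not a RayStation API: `DoseRecord`, `compare_mean_dose` and the numeric values are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum

class DoseConvention(Enum):
    DOSE_TO_WATER = "dose to water"
    DOSE_TO_MEDIUM = "dose to medium"

@dataclass(frozen=True)
class DoseRecord:
    engine: str                 # e.g. "CC" or "MC"
    convention: DoseConvention
    mean_dose_gy: float         # mean dose in the ROI (hypothetical value)
    roi_has_bone_or_high_z: bool

def compare_mean_dose(a: DoseRecord, b: DoseRecord):
    """Return (a - b in Gy, list of caveats about the comparison)."""
    caveats = []
    if a.convention is not b.convention:
        caveats.append(
            f"conventions differ: {a.convention.value} vs {b.convention.value}"
        )
        if a.roi_has_bone_or_high_z or b.roi_has_bone_or_high_z:
            caveats.append(
                "ROI contains bone or high-Z material: part of the "
                "difference may come from the convention, not the transport"
            )
    return a.mean_dose_gy - b.mean_dose_gy, caveats

# Hypothetical numbers, for illustration only
cc = DoseRecord("CC", DoseConvention.DOSE_TO_WATER, 60.0, True)
mc = DoseRecord("MC", DoseConvention.DOSE_TO_MEDIUM, 58.5, True)
diff, caveats = compare_mean_dose(cc, mc)
print(diff, caveats)
```

The function does not try to convert between conventions. It only refuses to let a raw difference circulate without the context needed to interpret it.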

What the CC/MC pair teaches about commercial TPSs

Perhaps the main editorial lesson here is this: RayStation makes it clear, in an almost pedagogical way, that modern radiotherapy is not just about “choosing the most sophisticated algorithm”. It depends on aligning:

- the type of case;

- the type of engine;

- the beam model;

- the delivery technique;

- the validation scope;

- the dose convention used.

By keeping CC and MC side by side, the system acknowledges a clinical truth that simplistic narratives sometimes hide: different engines have different roles, and the maturity of a service shows precisely in how it decides when each one should be used.

What a mature service does with this coexistence

When a service has CC and MC in the same TPS, the best strategy is not to elect a universal winner. The best strategy is to establish an explicit usage policy. Something like:

- where CC is the routine engine;

- in which scenarios MC is used as a final refinement;

- in which cases the comparison between the two is mandatory;

- how to interpret discrepancies in bone, implants and low-density heterogeneities;

- how to record the dose convention for each distribution.

Without this policy, the coexistence of engines becomes apparent freedom and practical confusion. With this policy, the institution transforms algorithmic variety into real clinical capacity.

This is the main point of RayStation as a commercial example. The value of the system is not in offering two engines so the user can pick an ideological champion. The value is in offering two physically different answers, each with its own area of strength, its own cost and its own validation limit.

A mature service does not use this coexistence to produce showcase comparisons. It uses it to make better decisions.