Why Monte Carlo Is Essential for Photon Radiotherapy

A dose calculation accuracy of about ±2% is a core requirement in modern radiotherapy. ICRU Report 50 recommends that the tumor dose remain within -5% to +7% of the prescribed dose, and detailed uncertainty analyses (ICRU Report 24, Brahme 1984, Dutreix 1984) show that 3% accuracy in dose calculation is needed to achieve ±5% in delivered dose. For certain tumors the tolerance on delivered dose tightens to 3.5%, which means the dose calculation algorithm itself must be accurate to within ±2%.

The Monte Carlo (MC) method starts from first principles and tracks individual particle histories, including secondary particle transport. In practice, MC produces accurate results in regions of tissue heterogeneities — lung, bone-tissue interfaces, surface irregularities — making it the most accurate algorithm for complex techniques such as IMRT, VMAT, and stereotactic radiotherapy. With the commercial introduction of MR-LINACs, MC has become not just preferable but mandatory: the magnetic field’s influence on dose distribution makes Monte Carlo the only viable calculation method (Hissoiny et al., 2011; Ghila et al., 2017; Kubota et al., 2020).

Despite these advances, MC accuracy depends heavily on implementation quality and input data. The patient’s anatomical information affects both irradiation geometry and interaction cross sections, and the lack of a general, accurate, user-scalable source model remains the primary barrier to widespread adoption. For a comprehensive overview, see our complete guide to Monte Carlo in Radiotherapy.

Requirements for a Clinical Photon MCTP System

A Monte Carlo treatment planning (MCTP) system goes far beyond a dose calculation algorithm coupled with a beam model. The system must provide beam setup capability, dose display, and dosimetric evaluation tools. Commercial TPS already offer these for conventional algorithms, but research packages typically lack them. For large-scale MCTP use, automation becomes essential — teams have addressed this by interfacing external MC with commercial TPS via DICOM-RT (Alexander et al., 2007; Rodriguez et al., 2013) or automatic interfaces (Fix et al., 2007; Siebers et al., 2000).

Beam Model: From Measurement to Full Simulation

Accurate characterization of the radiation beam exiting the treatment head is an absolute prerequisite for accurate patient dose calculations. Beam models for photon MCTP fall into two main categories: measurement-based and MC-based. Measurement-based models use analytical functions with parameters fitted to experimental data (Ahnesjo et al., 1992; Fippel et al., 2003; Faught et al., 2017). MC-based models can use full treatment head simulation, phase space (phsp) files, histogram-based models, or hybrid approaches.

Each approach involves clear trade-offs. Phsp files are detailed but require significant storage. Histogram models are compact and fast but may lose correlations between variables. Direct in-memory particle passing (without an intermediate phsp) is faster and eliminates large file storage. When particles from a finite phsp are reused, the resulting correlations affect the statistical uncertainty, which cannot fall below the limit set by the latent variance of the phsp (Sempau et al., 2001).

Beyond the primary beam (patient-independent part), the model must accurately represent patient-specific beam modifiers: blocks, hard wedges, dynamic wedges, multileaf collimators (MLCs), and other accessories. Errors in the beam model propagate through all subsequent dose calculation processes — extensive verification during commissioning is essential.

For beam modelling details, read our dedicated article on Monte Carlo modelling of external photon beams.

Patient Model and CT Conversion

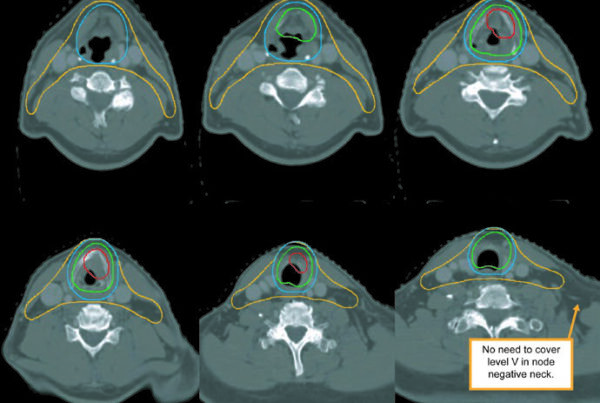

The patient’s anatomical representation directly determines dosimetric accuracy. MC algorithms require interaction data (cross sections) derived from tissue composition, not just electron density. Converting Hounsfield values to material composition involves segmentation into bins — more bins mean more accurate representation (Vanderstraeten et al., 2007). A default conversion with few materials is inadequate when high accuracy is required.
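The binning step described above can be sketched in a few lines of Python. This is a minimal illustration, not a clinical conversion: the bin edges, material names, and density calibration points below are hypothetical placeholders, and a real conversion has to come from the calibration of the specific CT scanner.

```python
import numpy as np

# Hypothetical HU bin edges and material labels, for illustration only.
HU_EDGES = np.array([-950.0, -120.0, 100.0, 300.0])
MATERIALS = ["air", "lung", "soft_tissue", "spongy_bone", "cortical_bone"]

# Hypothetical calibration points for a piecewise-linear HU to density curve.
HU_POINTS = np.array([-1000.0, 0.0, 1500.0])
DENSITY_POINTS = np.array([0.001, 1.0, 1.9])  # g/cm^3, assumed values

def hu_to_material_index(hu):
    """Return, for each voxel, the index of the material bin its HU falls in."""
    return np.digitize(hu, HU_EDGES)

def hu_to_density(hu):
    """Interpolate mass density from HU using the assumed calibration points."""
    return np.interp(hu, HU_POINTS, DENSITY_POINTS)

ct = np.array([-1000.0, -700.0, 0.0, 200.0, 1200.0])
labels = [MATERIALS[i] for i in hu_to_material_index(ct)]
density = hu_to_density(ct)
```

Adding more entries to `HU_EDGES` and `MATERIALS` refines the segmentation, which is the sense in which more bins give a more accurate representation.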

Image artifacts, grid resampling, and differences between CT and treatment couches introduce additional errors. Volken et al. (2008) demonstrated that integral conservative Hermitian curve interpolation significantly improves accuracy over linear or cubic interpolation. Dual-energy CT scanners have potential to improve tissue identification (Bazalova et al., 2008; Lalonde and Bouchard, 2016), though the benefit is more pronounced for protons and kV radiotherapy than for MV photons (van Elmpt et al., 2016). Calibration phantoms must be carefully evaluated — Teflon is not an appropriate representation for cortical bone (Verhaegen and Devic, 2005).

Dose Calculation and Evaluation

A unique advantage of MC is its capability to calculate dose for dynamic situations — patient motion, IMRT, VMAT — continuously, without approximating gantry rotation by multiple static fields. MC can also provide time-resolved dose rate distributions (Mackeprang et al., 2016; Podesta et al., 2016). Non-coplanar techniques with dynamic collimator and couch rotations (Fix et al., 2018; Manser et al., 2019; Smyth et al., 2019) further expand the accessible degrees of freedom.

MC calculation time does not grow in proportion to the number of beams when only the statistical uncertainty in the target is considered, because every beam contributes histories to the target. However, it may increase if acceptable uncertainty is also required in OARs, which receive lower particle fluence. Dose evaluation with MC demands special attention to statistical uncertainty, information that is generally absent from commercial display tools. Whether dose-to-medium or dose-to-water was calculated must be documented regardless of the algorithm used.

Commissioning and Validation of Photon Monte Carlo

Tolerances and Acceptance Criteria

Defining tolerance criteria before commissioning is fundamental. Several metrics are used for dose distribution comparison: dose difference, distance to agreement (DTA), gamma index (Low et al., 1998), and variants (Bakai et al., 2003; Sumida et al., 2015). A typical criterion is 2%/2 mm, but if this criterion is applied to the patient dose calculation as a whole, the error attributable to the beam model must not exceed 1%/1 mm (Keall et al., 2003).
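To make the gamma index concrete, here is a minimal 1D sketch in Python following the definition of Low et al. (1998): for each reference point, the minimum over evaluated points of the combined, normalized distance and dose difference. The brute-force search, the global normalization to the reference maximum, and the synthetic Gaussian profiles are assumptions chosen for brevity; clinical tools work in 2D or 3D and interpolate the evaluated distribution.

```python
import numpy as np

def gamma_1d(x_ref, d_ref, x_eval, d_eval, dose_tol=0.02, dta_mm=2.0):
    """Global 1D gamma index at each reference point (brute-force search)."""
    x_ref, d_ref = np.asarray(x_ref, float), np.asarray(d_ref, float)
    x_eval, d_eval = np.asarray(x_eval, float), np.asarray(d_eval, float)
    dose_norm = dose_tol * d_ref.max()  # global normalization (a choice)
    # Pairwise normalized distance and dose difference, shape (n_ref, n_eval).
    dist = (x_eval[None, :] - x_ref[:, None]) / dta_mm
    diff = (d_eval[None, :] - d_ref[:, None]) / dose_norm
    return np.sqrt(dist**2 + diff**2).min(axis=1)

# Synthetic example: a calculated profile shifted by 1 mm from the measurement.
x = np.arange(-50.0, 50.5, 0.5)                  # positions in mm
measured = np.exp(-(x / 20.0) ** 2)
calculated = np.exp(-((x - 1.0) / 20.0) ** 2)
gamma = gamma_1d(x, measured, x, calculated)     # 2%/2 mm by default
pass_rate = float(np.mean(gamma <= 1.0))
```

A point passes when its gamma value is at most 1. In this example the 1 mm shift lies within the 2 mm DTA, so every point passes even where the local dose difference exceeds 2%.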

Applying uniform criteria across all situations is questionable. Build-up regions, outside the direct field, and different field sizes may justify different tolerances. Given MC’s statistical nature, there is always a probability of dose values with large random errors that fail the criteria. Criteria should be chosen in relation to the planned clinical use of the machine.
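A rough feel for how often purely random errors fail a dose-difference criterion can be obtained under a simplifying assumption: per-voxel MC noise is Gaussian, unbiased, and independent between voxels, and the DTA part of the criterion is ignored. The function name and these assumptions are illustrative, not taken from the cited works.

```python
import math

def fraction_outside_tolerance(sigma_pct, tol_pct):
    """Expected fraction of voxels whose random error exceeds +/- tol_pct,
    assuming zero-mean Gaussian noise with standard deviation sigma_pct."""
    return math.erfc(tol_pct / (sigma_pct * math.sqrt(2.0)))

# 1% statistical uncertainty against a 2% tolerance: about 4.6% of voxels fail.
frac_1pct = fraction_outside_tolerance(1.0, 2.0)
# 2% statistical uncertainty against the same tolerance: about 32% fail.
frac_2pct = fraction_outside_tolerance(2.0, 2.0)
```

Even a beam model with no systematic error will therefore show some failing voxels, which is one reason pass-rate thresholds below 100% are used.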

Validation: Measurements and Comparisons

The validation process typically includes the following comparisons:

| Measurement Type | Description | Notes |
|---|---|---|
| Depth dose curves | Relative and absolute, open and modified fields | In water or equivalent phantoms; various field sizes including off-axis |
| Lateral profiles | Open, wedged, MLC-shaped, IMRT and VMAT fields | Voxel size compatible with detector sensitive volume |
| Output factors | Absolute dose calibration (cGy/MU) | Multi-point method preferred over single-point (Siebers et al., 1999; Fix et al., 2007) |
| Heterogeneous phantoms | Water-bone-water or equivalents | Validates transport in non-water materials and CT conversion |
| MLC transmission | Transmission profiles and interleaf leakage | Up to 10% DVH differences if MLC is inaccurately modelled (Reynaert et al., 2005) |
| Dynamic wedges (EDW) | Dose curves and profiles at various wedge angles | Validation with ionization chamber at multiple points |
| IMRT/VMAT verification | MC vs measurement for individual fields | Film + ionization chamber in solid phantom |
| Clinical plans | MC vs conventional algorithm comparison | Simple and complex cases, water and CT-based patient models |

Source: Monte Carlo Techniques in Radiation Therapy (2nd ed., CRC Press, 2022)

Comparisons at shallow depths are especially sensitive to beam model parameters. In-air measurements help evaluate the model with reduced scatter influence. Measurement quality is crucial — energy response dependencies, effective point of measurement, and dose rate must all be considered. Different detectors can produce penumbra width differences of up to a factor of 2 (Sahoo et al., 2008).

MLC validation deserves particular care. Without interleaf leakage modelling, average transmission may be correct but the transmission profile shape cannot be reproduced. For enhanced dynamic wedges (EDWs), where one jaw travels during irradiation, ionization chamber measurements at points along dose curves are essential. For dynamic MLC applications (IMRT), validation requires individual fields delivered at gantry zero on a water phantom, comparing point measurements and films with MC calculations.

MCTP Systems: Research and Commercial

Numerous research institutions have developed MCTP systems over the past decades. The table below summarizes the main research systems:

| System | Institution | MC Code | Beam Model / Notes |
|---|---|---|---|
| RTMCNP | UCLA | MCNP4A | User-friendly MCNP interface |
| EGS4-MCTP | Memorial Sloan Kettering | EGS4 | Dual-source (primary + scatter) |
| MCDOSE | Stanford / Fox Chase | EGS4 | phsp or multiple source models |
| VCU MCTP | Virginia Commonwealth | EGSnrc | Dedicated MLC transport method |
| RT_DPM | Univ. of Michigan | DPM (BEAMnrc phsp) | Dose Planning Method |
| XVMC-based | Univ. of Tübingen | XVMC | Virtual fluence model + MC optimization |
| MMCTP | McGill University | BEAMnrc + XVMC | DICOM-RT, contouring, visualization |
| SMCP | Inselspital / Univ. of Bern | EGSnrc or VMC++ | Registered in Eclipse (Varian); supports protons, MERT, dynamic trajectory |
| PRIMO | UPC / Essen | PENELOPE / DPM | GUI + DICOM-RT import + dose evaluation |
| CARMEN | Univ. of Seville | EGSnrc | MATLAB, mixed electron-photon inverse optimization |

Source: Monte Carlo Techniques in Radiation Therapy (2nd ed., CRC Press, 2022)

On the commercial side, the main systems are:

| System | Vendor | MC Code | Key Features |
|---|---|---|---|
| Peregrine | NOMOS / Corvus | Custom | 4-source model with correlated histograms (discontinued) |
| Monaco | Elekta (CMS) | XVMC | Virtual fluence model with 11 parameters; transmission filters for MLC (~100x faster than full MC) |
| iPlan MC | Brainlab | Custom | 93 in-air + 97 in-water measurements; speed-optimized vs accuracy-optimized MLC option |
| ISOgray | DOSIsoft | PENELOPE / PENFAST | Selective particle tracking with skin/nonskin areas; Elekta, Siemens, Varian LINACs |
| Precision MC | Accuray | Custom | CyberKnife with fixed, Iris, and MLC collimators; single-source target model |
| RayStation MC | RaySearch | GPU in-house | Class II condensed history; Woodcock tracking; 11 s for dual-arc prostate (3 mm³, GTX 1080Ti, 1% uncertainty) |

Source: Monte Carlo Techniques in Radiation Therapy (2nd ed., CRC Press, 2022)

RayStation MC deserves special mention as it represents the current trend: GPU implementation with Woodcock tracking, commissioning using the same measurements as the collapsed cone algorithm (but with separate model parameters), and online statistical uncertainty determined by batches. The stopping criterion is the mean uncertainty across voxels above 50% of maximum dose. This speed makes MC clinically practical for daily routine.
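The batch-based stopping rule described above can be illustrated with a short Python sketch. This is not RaySearch's implementation: the function names, the use of the standard error of the batch mean, and the 1% target are assumptions made only to show the idea of averaging the relative uncertainty over voxels above 50% of the maximum dose.

```python
import numpy as np

def batch_uncertainty(batch_doses):
    """Mean dose and relative standard error per voxel from batch results.

    batch_doses has shape (n_batches, n_voxels)."""
    batches = np.asarray(batch_doses, float)
    n = batches.shape[0]
    mean = batches.mean(axis=0)
    sem = batches.std(axis=0, ddof=1) / np.sqrt(n)
    rel = np.divide(sem, mean, out=np.zeros_like(mean), where=mean > 0)
    return mean, rel

def mean_uncertainty_above_half_max(batch_doses):
    """Average relative uncertainty over voxels above 50% of the maximum dose."""
    mean, rel = batch_uncertainty(batch_doses)
    mask = mean > 0.5 * mean.max()
    return float(rel[mask].mean())

def should_stop(batch_doses, target=0.01):
    """True once the averaged uncertainty reaches the (assumed) target."""
    return mean_uncertainty_above_half_max(batch_doses) <= target

# Three batches, two voxels: only the high-dose voxel enters the criterion.
demo = [[1.0, 0.1], [1.1, 0.3], [0.9, 0.2]]
metric = mean_uncertainty_above_half_max(demo)
```

Restricting the average to high-dose voxels keeps the criterion focused on the target region, at the cost of saying little about uncertainty in low-dose OARs.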

Clinical Applications: Noise, Timing, and Lung Impact

Dose Distribution Noise and Reliable Metrics

Unlike deterministic algorithms, MC produces dose distributions with statistical uncertainty. This affects isodose lines, DVHs, dose indices, and cost function convergence. Uncertainty is determined by the history-by-history method (Walters et al., 2002), and quadrupling the number of histories is required to halve the uncertainty.
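The history-by-history estimator can be written compactly. The sketch below follows the form given by Walters et al. (2002), where the uncertainty of the mean is computed from the sums of $X_i$ and $X_i^2$ over the $N$ histories. Storing every history in an array and drawing synthetic exponential energy deposits are simplifications for illustration; production codes accumulate the two sums on the fly.

```python
import numpy as np

def history_by_history(deposits):
    """Mean and standard error per voxel from per-history deposits.

    deposits has shape (n_histories, n_voxels)."""
    x = np.asarray(deposits, float)
    n = x.shape[0]
    mean = x.sum(axis=0) / n
    mean_sq = (x ** 2).sum(axis=0) / n
    sem = np.sqrt(np.maximum(mean_sq - mean ** 2, 0.0) / (n - 1))
    return mean, sem

rng = np.random.default_rng(0)
few = rng.exponential(1.0, size=(10_000, 1))    # N histories (synthetic)
many = rng.exponential(1.0, size=(40_000, 1))   # 4N histories (synthetic)

mean_few, sem_few = history_by_history(few)
mean_many, sem_many = history_by_history(many)
# Ratio of relative uncertainties; expected to be close to 2.
ratio = float((sem_few / mean_few)[0] / (sem_many / mean_many)[0])
```

Because the standard error falls as $1/\sqrt{N}$, four times as many histories give roughly half the relative uncertainty.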

In practice, 2% uncertainty per beam yields reasonable precision within the PTV when three or more beams are used. But point values such as $D_{max}$ and $D_{min}$ are highly sensitive — a new simulation with a different random seed may yield different results. Volume-based quantities like $D_{median}$ or $D_{mean}$ are far more reliable metrics for prescription and evaluation with MC. For OARs, uncertainty can be substantially higher than in the target, requiring more histories if precise NTCP evaluations are needed.
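The sensitivity of point values to noise is easy to reproduce with a toy model. The sketch below assumes a perfectly uniform true dose in a 5000-voxel target and independent Gaussian noise of 2% per voxel, then repeats the "simulation" with 20 different seeds. Both the noise model and the numbers are assumptions for illustration; real MC noise depends on dose and geometry.

```python
import numpy as np

def dose_metrics(seed, n_voxels=5000, true_dose=1.0, rel_sigma=0.02):
    """Return (D_max, D_min, D_mean, D_median) for one noisy realization."""
    rng = np.random.default_rng(seed)
    dose = true_dose * (1.0 + rel_sigma * rng.standard_normal(n_voxels))
    return dose.max(), dose.min(), dose.mean(), np.median(dose)

results = np.array([dose_metrics(seed) for seed in range(20)])
spread = results.std(axis=0, ddof=1)  # seed-to-seed spread of each metric
bias = results.mean(axis=0) - 1.0     # offset from the true uniform dose
```

In this toy model $D_{max}$ and $D_{min}$ are shifted away from the true dose by several percent and vary from seed to seed, while $D_{mean}$ and $D_{median}$ stay close to the true value.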

Denoising techniques — including deep learning approaches (Javaid et al., 2019) — show promise but must preserve true dose gradients. In clinical practice, denoising can assist the iterative planning process, but final dose calculations should achieve adequate uncertainty without relying on denoising.

Calculation Time and GPU Optimization

Calculation time depends on the desired uncertainty, voxel size, scoring volume, beam modifiers, and hardware. The AAPM Task Group 105 (Chetty et al., 2007) compiled timing benchmarks, and processor speeds have continued to increase rapidly since then. GPU implementations (Badal and Badano, 2009; Jia et al., 2011; Tian et al., 2015) demonstrated substantial efficiency gains.

Transport optimizations — such as simplifying transport through secondary jaws when the MLC is used below them (Schmidhalter et al., 2010) — also reduce calculation time. Inverse planning with MC in the cost function remains the one scenario where overall calculation time may become unacceptable given the large number of iterations required.

Lung: Where MC Makes the Greatest Difference

Lung cases show the largest discrepancies between MC and conventional algorithms. Electron transport modelling in low densities is the Achilles’ heel of convolution algorithms. Fogliata et al. (2007) demonstrated errors of up to 30% with pencil beam in simple lung inhomogeneities; for more advanced algorithms, approximately 8%. Grid-based Boltzmann equation solvers (like Acuros) further reduce this gap (Bush et al., 2011; Fogliata et al., 2011).

Wang et al. (2002a) found differences exceeding 10% between MC and algorithms using equivalent path length correction. A clinically relevant finding: 6 MV photons are preferable to 15 MV in lung because the shorter lateral electron range at lower energies better preserves target coverage (Wang et al., 2002b; Madani et al., 2007). The smaller the fields and higher the energies, the larger the discrepancies with conventional algorithms.

See our article on Dynamic Beam Delivery and 4D Monte Carlo for how MC handles IMRT and VMAT in dynamic scenarios, and the patient dose calculation article for strategies in heterogeneous media.

Monte Carlo as a QA Tool

Beyond treatment planning, MC serves as an independent quality assurance tool. The ability to recalculate dose distributions from first principles makes MC a robust check for plans calculated with other algorithms — particularly valuable for monitor unit verification in IMRT and for retrospective re-evaluation of clinical studies with more accurate algorithms.

Comparing MC dose distributions with those from other algorithms helps identify situations where a given TPS’s accuracy is insufficient. For deeper theoretical foundations, consult our article on Monte Carlo fundamentals in radiotherapy.

Monte Carlo in Clinical Photon Beam Routine

Monte Carlo is no longer a research-only tool. GPU implementations deliver calculations in seconds, structured commissioning is supported by vendors, and validation against experimental measurements is well established. For MR-LINACs, MC is indispensable — no other method adequately handles the magnetic field.

The main remaining barrier is the source model: each user must commission their accelerator so that MC meets accuracy requirements (typically 2% or 2 mm). Volume-based quantities like $D_{mean}$ are preferable to point values for dose prescription. And for lung cases, MC’s clinical impact is unequivocal — ignoring real particle transport compromises treatment quality.

To explore other aspects of Monte Carlo in radiotherapy, browse the complete series from our comprehensive Monte Carlo in Radiotherapy guide.