When someone describes collapsed cone as just “an intermediate algorithm between pencil beam and Monte Carlo”, they are almost always erasing the very thing that made this method last. Collapsed cone convolution (CCC) did not survive out of habit. It survived because it solved a real clinical problem: taking three-dimensional scatter and heterogeneity seriously without pushing calculation time beyond what clinical routine allows.

This is why it continues to appear in current discussions, including within modern commercial TPS. The central idea remains strong: calculate the energy released locally, redistribute it on a consistent physical basis, and do this quickly enough that the physicist can plan, review and optimize without turning each case into a computational experiment.

It’s worth looking at the method this way because collapsed cone is not just a historical stage. It is one of the most influential responses ever given to the ongoing tension between physical fidelity and operational viability.

In this Article

- 1. The problem that CCC came to solve

- 2. What Ahnesjö’s idea does

- 3. The role of TERMA

- 4. The role of Mackie’s kernels

- 5. The analytical form of kernels

- 6. What the handbook helps you see

- 7. Why the computational gain was so decisive

- 8. Why was this so important in lung

- 9. From literature to commercial TPS

- 10. The link to RayStation

- 11. What the CCC continues to teach even in the era of Acuros and MC

- 12. Cho’s article and practical implementation

- 13. What collapsed cone isn’t

- 14. Where it continues to make sense

The problem that CCC came to solve

Convolution/superposition methods already represented an important advance over the more simplified pencil beam algorithms. The fundamental idea was clear: the dose at a point can be built up from the energy released at neighboring points and from a kernel that describes how this energy spreads and deposits.

In compact form:

$$D(\mathbf{r}) = \int T(\mathbf{r}')\, K(\mathbf{r} - \mathbf{r}')\, d^3r'$$

Where:

- T(r’) represents a quantity linked to locally released energy, often associated with TERMA;

- K represents the energy deposition kernel.
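The convolution idea can be sketched in one dimension for a homogeneous medium. This is only an illustration: the TERMA curve, the kernel shape and the names `convolve_dose_1d`, `terma` and `kernel` are assumptions made here, not values from any of the cited works.

```python
import numpy as np

def convolve_dose_1d(terma, kernel):
    """Discrete form of D(r) = sum over r' of T(r') * K(r - r')."""
    return np.convolve(terma, kernel, mode="same")

# Hypothetical TERMA on a 0.5 cm grid: simple exponential fall-off with depth.
depth_cm = np.arange(0.0, 30.0, 0.5)
terma = np.exp(-0.05 * depth_cm)

# Hypothetical kernel, normalized so that all released energy is deposited.
offsets_cm = np.arange(-5.0, 5.5, 0.5)
kernel = np.exp(-np.abs(offsets_cm))
kernel /= kernel.sum()

dose = convolve_dose_1d(terma, kernel)

# A single-voxel source simply reproduces the kernel around that voxel.
point_source = np.zeros_like(terma)
point_source[30] = 1.0
point_dose = convolve_dose_1d(point_source, kernel)
```

In three dimensions and with a kernel that changes with local density, the same sum has to be evaluated for every pair of voxels, which is where the cost discussed next comes from.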

The problem is that, in three dimensions and in heterogeneous media, complete superposition can become very costly. It is necessary to consider many possible contributions for each voxel, and the number of operations grows quickly.

It was exactly this bottleneck that motivated the classic work of Ahnesjö in 1989.

What Ahnesjö’s idea does

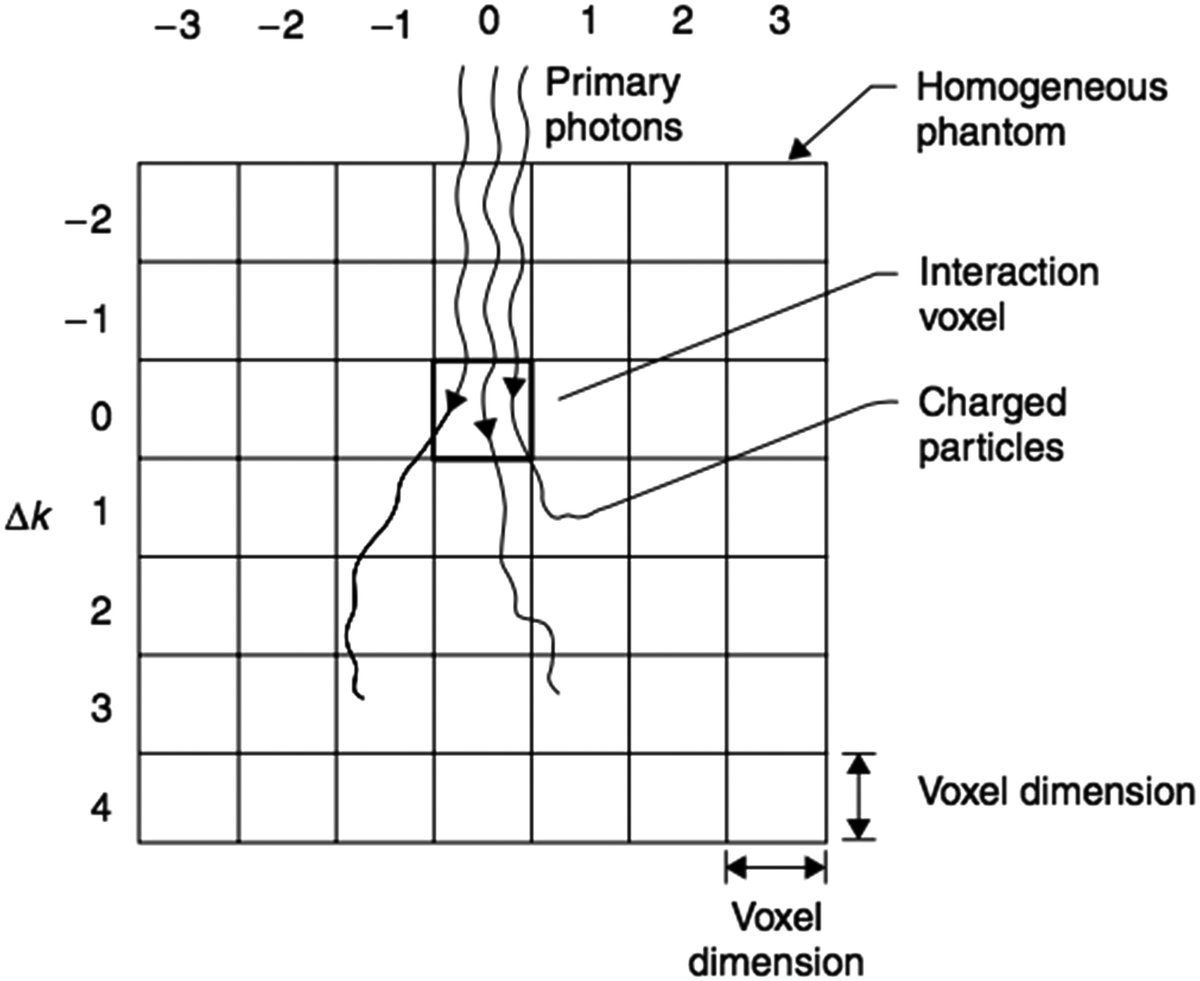

According to the local note on Ahnesjö's article, the collapsed cone convolution method starts from the calculation of TERMA by ray tracing and uses poly-energetic kernels derived from a database of mono-energetic kernels. The key insight is how the energy is transported:

instead of treating the full angular contribution of the kernel in all its three-dimensional granularity, the method groups the energy into cones of equal solid angle and collapses this energy along the center lines of these cones.

This formulation greatly reduces the number of operations and also allows heterogeneities to be incorporated far more rigorously than in simpler methods.

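In editorial terms, the idea can be summarized as follows:

$$D(\mathbf{r}) \approx \sum_{m=1}^{M} \int_0^{\infty} T(\mathbf{r} - s\,\hat{\Omega}_m)\, k_m(s)\, ds$$

Here $D$ is the dose at point $\mathbf{r}$, $\hat{\Omega}_m$ is the axis of cone $m$, $M$ is the number of cones, and $k_m(s)$ is the kernel energy assigned to that cone at distance $s$ along its axis. It is not the original formal equation of the paper, but it is a useful way of showing the reader the essential mechanism: energy is redistributed in discrete directions chosen so as to preserve the relevant physical content without paying the full cost of total superposition.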
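The grouping into cones of equal solid angle can be sketched as follows. The binning used here (uniform steps in cos θ and in azimuth) is one simple way to obtain equal solid angles and is an assumption of this example; the function name `cone_axes` is hypothetical, and clinical implementations may distribute their directions differently.

```python
import numpy as np

def cone_axes(n_polar, n_azimuth):
    """Return unit axes and solid angles for n_polar * n_azimuth equal cones."""
    # Uniform steps in cos(theta) and in phi give bins of equal solid angle.
    cos_edges = np.linspace(1.0, -1.0, n_polar + 1)
    cos_mid = 0.5 * (cos_edges[:-1] + cos_edges[1:])
    phi_mid = (np.arange(n_azimuth) + 0.5) * 2.0 * np.pi / n_azimuth

    cos_t, phi = np.meshgrid(cos_mid, phi_mid, indexing="ij")
    sin_t = np.sqrt(1.0 - cos_t**2)
    axes = np.stack(
        [sin_t * np.cos(phi), sin_t * np.sin(phi), cos_t], axis=-1
    ).reshape(-1, 3)

    # Every cone covers the same share of the full sphere (4*pi steradians).
    solid_angle = np.full(len(axes), 4.0 * np.pi / (n_polar * n_azimuth))
    return axes, solid_angle

axes, solid_angle = cone_axes(n_polar=6, n_azimuth=8)
```

Each row of `axes` is the center line along which the energy of one cone would be collapsed.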

The role of TERMA

To understand CCC, it is necessary to talk about TERMA without turning the text into a dry lesson.

TERMA is the total energy released per unit mass from the interactions of primary photons in the medium. In many convolution/superposition schemes, it functions as the “local source” of energy that will then be spread throughout the kernel.

In the local summary of Ahnesjö's article, TERMA is calculated by ray tracing the beam through the patient, while energy redistribution uses deposition kernels derived from a Monte Carlo basis. This coupling already shows the strength of the method:

- the attenuation and energy released are treated locally;

- spreading is handled with physically grounded kernels;

- computation is made viable by collapsing into cones.
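The ray-tracing step for TERMA can be sketched for a single ray. This is a minimal sketch under strong assumptions: a mono-energetic beam, no divergence or inverse-square term, and an illustrative mass attenuation coefficient (roughly that of water near 2 MeV). The name `terma_along_ray` and the phantom are hypothetical.

```python
import numpy as np

MU_OVER_RHO = 0.0494  # cm^2/g, illustrative value (assumption)

def terma_along_ray(density_g_cm3, step_cm, mu_over_rho, psi0=1.0):
    """TERMA per unit mass at voxel centers along one ray."""
    density = np.asarray(density_g_cm3, dtype=float)
    # Radiological depth (g/cm^2) accumulated up to each voxel center.
    rad_depth = np.cumsum(density) * step_cm - 0.5 * density * step_cm
    # Energy fluence attenuated along the ray, then T = (mu/rho) * Psi.
    psi = psi0 * np.exp(-mu_over_rho * rad_depth)
    return mu_over_rho * psi

water = np.ones(40)
# Hypothetical slab phantom: 5 cm water, 10 cm lung (0.25 g/cm^3), 5 cm water.
slab = np.concatenate([np.ones(10), np.full(20, 0.25), np.ones(10)])

terma_water = terma_along_ray(water, 0.5, MU_OVER_RHO)
terma_slab = terma_along_ray(slab, 0.5, MU_OVER_RHO)
```

Behind the lung slab the beam has crossed less mass, so `terma_slab` stays above `terma_water` at the same geometric depth. How that released energy is then deposited is the job of the kernels.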

The role of Mackie’s kernels

The story of collapsed cone is not complete without the work of Mackie et al. on energy deposition kernels generated with EGS. The local note summarizes well why this article matters: it provides the physical basis for methods that calculate dose from the convolution of fluence with kernels that describe the energy transport and deposition of secondary particles.

In other words, Mackie helps build the physical raw material; Ahnesjö helps make the clinical use of this raw material computationally viable.

This combination explains why CCC has become so influential. It does not invent the idea of a deposition kernel, but it does introduce an extremely efficient way of using this idea in a real planning context.

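The analytical form of kernels

In the local note on Ahnesjö's article, the poly-energetic kernels are described by an analytical expression of the type:

$$h(r, \theta) = \frac{A_\theta\, e^{-a_\theta r} + B_\theta\, e^{-b_\theta r}}{r^2}$$

The coefficients $A_\theta$, $a_\theta$, $B_\theta$ and $b_\theta$ depend on energy and angle, and the presence of exponential terms over r² helps capture scattering and attenuation behavior.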

This analytical representation is important for two reasons.

First, because it facilitates recursive calculation along the chosen discrete directions.

Second, because it shows that CCC is not just a geometric sampling trick. It depends on a mathematical description of the kernel that preserves the physics of the problem sufficiently well.
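The first point can be illustrated with a small sketch. It assumes a kernel of the form (A·e^(−a·r) + B·e^(−b·r))/r², a homogeneous medium, and a single exponential term accumulated along one line; the coefficient values and the function names are hypothetical. Because each term is exponential, the sum over all upstream voxels can be updated voxel by voxel instead of being recomputed from scratch.

```python
import numpy as np

def kernel_value(r_cm, A, a, B, b):
    """Two-exponential kernel divided by r^2 (coefficients are assumptions)."""
    return (A * np.exp(-a * r_cm) + B * np.exp(-b * r_cm)) / r_cm**2

def line_sum_direct(terma, a, step_cm):
    """O(N^2): every voxel sums over all upstream voxels on the line."""
    n = len(terma)
    out = np.zeros(n)
    for i in range(n):
        for j in range(i + 1):
            out[i] += terma[j] * np.exp(-a * (i - j) * step_cm)
    return out

def line_sum_recursive(terma, a, step_cm):
    """O(N): carry the attenuated running total from voxel to voxel."""
    decay = np.exp(-a * step_cm)
    out = np.zeros(len(terma))
    running = 0.0
    for i, t in enumerate(terma):
        running = running * decay + t
        out[i] = running
    return out

step_cm = 0.2
terma = np.exp(-0.05 * np.arange(50) * step_cm)  # hypothetical TERMA
direct = line_sum_direct(terma, a=2.0, step_cm=step_cm)
recursive = line_sum_recursive(terma, a=2.0, step_cm=step_cm)
```

Both functions return the same values; only the recursive one scales linearly with the number of voxels on the line.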

What the handbook helps you see

The handbook-part-f-dose-calculation summarizes the computational motivation of the method very well. The text notes that, instead of systematically accounting for all contributions from all voxels in all directions, collapsed cone preferentially samples relevant neighborhoods along a finite set of lines or “pipes”. Then, the energy emitted by neighboring voxels is collapsed into these average directions, preserving total transport.

This is where the name really makes sense. The algorithm works with transport cones, but collapses the energy in these average axes to gain speed without abandoning the idea of three-dimensional scattering.

The same text highlights something very important for clinical practice: in contrast to superposition methods that assume local energy deposition and ignore the transport of secondary electrons, implementations derived from CCC are able to represent with reasonable accuracy both the penumbra widening and the dose reduction on the axis of high-energy beams that cross the lung.

This sentence sums up the place of the method in history. CCC was an efficient way to bring back to the clinic a part of physics that simpler algorithms were missing.

Why the computational gain was so decisive

Today it is easy to underestimate the impact of this type of optimization because the available hardware is much better. But the story of CCC only makes sense when you remember the computational problem it faced. Full three-dimensional superposition on heterogeneous media is expensive. Collapsed cone reduced this cost precisely because it exchanged a much more extensive integration for physically controlled angular sampling.

The handbook summarizes this gain very clearly by explaining that, instead of systematically considering all voxels that theoretically contribute to the dose at each point, the method calculates contributions along a limited number of directions. The energy is then normalized and collapsed into these average axes.

In clinical language, this means:

- less cost;

- maintenance of relevant physics;

- feasibility of routine use in TPS.
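A rough order-of-magnitude comparison makes the gain concrete. The counts below are simplifying assumptions made for this example: a cubic grid with N voxels per side, one kernel evaluation per voxel pair for full superposition, and one line step per voxel per direction for collapsed cone with M directions. They ignore any extra ray tracing that a heterogeneous full superposition would need.

```python
def full_superposition_ops(n_side):
    """Every voxel receives a contribution from every voxel: N^3 * N^3."""
    return n_side**6

def collapsed_cone_ops(n_side, n_directions):
    """Each direction sweeps the volume once: M * N^3."""
    return n_directions * n_side**3

n_side = 100          # hypothetical grid, 100 voxels per side
n_directions = 100    # hypothetical number of cone directions

full_ops = full_superposition_ops(n_side)
ccc_ops = collapsed_cone_ops(n_side, n_directions)
speedup = full_ops // ccc_ops
```

Under these assumptions the ratio is N³/M, so the advantage grows quickly as the grid gets finer.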

Without this balance, the method would have been just an elegant paper idea. With it, it became a pillar of clinical software.

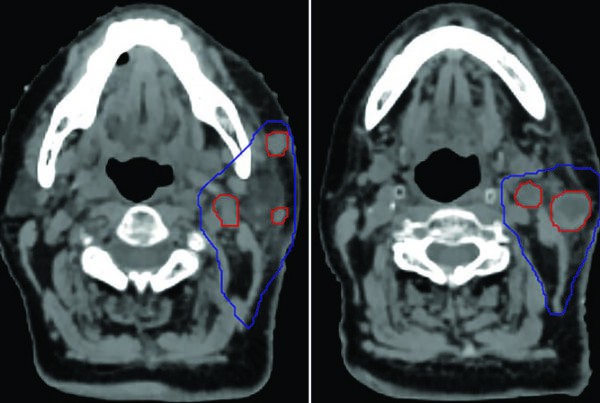

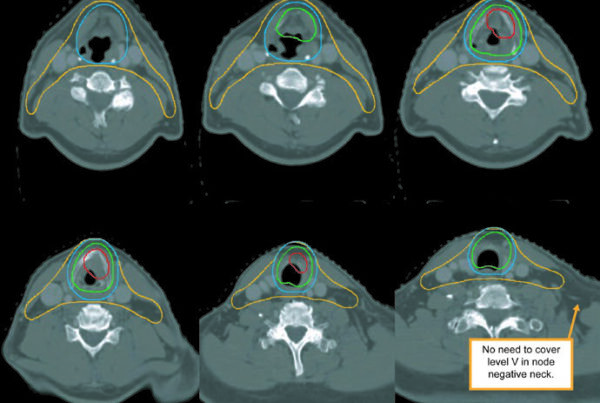

Why was this so important in lung

Lung has always been a very demanding test for dose algorithms. Low density alters the transport of charged particles, widens penumbra, and changes how the dose reorganizes within and after heterogeneity.

The handbook precisely highlights that CCC implementations can reasonably capture:

- the widening of the penumbra at low density;

- dose reduction along the beam axis within the lung.

This is a crucial difference from many simpler pencil beam algorithms, which tend to treat heterogeneity primarily as depth correction.

This is why CCC has remained so central to commercial practice. It offers a real physical improvement in one of the most sensitive clinical settings, but without the full cost of Monte Carlo.
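Both lung effects can be reproduced qualitatively with a toy one-dimensional lateral model. This is not a CCC implementation: it assumes a uniform medium, an exponential lateral kernel whose range is stretched by 1/density, and a hypothetical range of 0.3 cm in water. The function names are illustrative only.

```python
import numpy as np

def lateral_profile(x_cm, half_width_cm, range_water_cm, density_g_cm3):
    """Field edge blurred by a kernel whose range scales with 1/density."""
    dx = x_cm[1] - x_cm[0]
    field = (np.abs(x_cm) <= half_width_cm + 1e-9).astype(float)
    scaled_range = range_water_cm / density_g_cm3
    kernel = np.exp(-np.abs(x_cm) / scaled_range) * dx / (2.0 * scaled_range)
    return np.convolve(field, kernel, mode="same")

def penumbra_width(x_cm, profile):
    """Distance between the 80% and 20% levels on the right-hand edge."""
    right = x_cm >= 0
    xr, pr = x_cm[right], profile[right] / profile.max()
    # The profile falls with x, so reverse it to give np.interp rising values.
    x80 = np.interp(0.8, pr[::-1], xr[::-1])
    x20 = np.interp(0.2, pr[::-1], xr[::-1])
    return x20 - x80

x_cm = np.linspace(-10.0, 10.0, 401)
water = lateral_profile(x_cm, 1.5, 0.3, density_g_cm3=1.0)
lung = lateral_profile(x_cm, 1.5, 0.3, density_g_cm3=0.25)

center_water, center_lung = water[200], lung[200]
pen_water, pen_lung = penumbra_width(x_cm, water), penumbra_width(x_cm, lung)
```

For this 3 cm field the low-density profile has a wider penumbra and a lower central value, which is the behavior that a depth-only correction cannot produce.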

From literature to commercial TPS

The handbook itself mentions that CCC methods and their derivatives have been successfully implemented in several clinical TPS, including platforms that have evolved over time into well-known commercial solutions. The text also explicitly mentions RayStation among the most recent examples.

This continuity is revealing. An algorithm only survives so long in a clinical environment when it delivers three things at the same time:

- sufficiently strong physical base;

- stable behavior in a wide range of cases;

- computational cost compatible with the routine.

The CCC fulfills exactly this role. That’s why it continues to appear in modern systems, even alongside heavier methods.

The link to RayStation

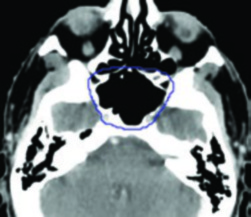

In the case of RayStation, the official white paper describes the clinical photon engine as based on the principles of collapsed cone convolution superposition. The summarized sequence includes:

- multi-source model for fluence;

- divergent ray tracing;

- EGSnrc kernels for energy spreading;

- separate calculation of electron contamination.

This summary is important because it shows how the CCC tradition has been incorporated into modern commercial architecture. The historical method does not appear in isolation; it is combined with:

- richer treatment head modeling;

- description of MLC, leaf tips and tongue-and-groove;

- integration with optimization flow and contemporary planning.

In other words, the modern clinical CCC is not just a copy of the classic paper. It is the industrially refined continuation of a central idea.

What the CCC continues to teach even in the era of Acuros and MC

Even with the growing presence of deterministic transport and Monte Carlo, CCC continues to teach an important lesson: in radiotherapy, a good solution is not always the most complete one from an absolute physical point of view. Sometimes the best solution is the one that captures the dominant phenomenon with sufficient fidelity at an acceptable cost.

This is exactly why the method has aged so well. It holds up because it answers a useful clinical question:

how much physics can I bring into the calculation without giving up routine use?

This question is still current. And collapsed cone remains one of the most convincing answers ever given to it.

Cho’s article and practical implementation

The local note on Cho et al. (2012) is useful because it shows an intermediate layer between Ahnesjö's historical article and mature commercial TPS. The work describes the practical implementation of a CCC algorithm in a planning system, using:

- three-source model for photon fluence;

- calculation of TERMA with poly-energetic spectrum, attenuation, horn effect, hardening and transmission;

- poly-energetic kernel approximated by dozens of collapsed cone lines.

This article is editorially valuable because it shows that CCC did not remain at the conceptual level. It became a practical implementation language in TPS.
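The effect of a poly-energetic spectrum on TERMA can be sketched with a few energy bins. This covers only the spectrum, attenuation and hardening items; the horn effect and transmission are left out. The four-bin spectrum, its weights and the approximate water attenuation coefficients are assumptions of this example, not data from Cho et al.

```python
import numpy as np

# Hypothetical bins near 0.5, 1, 2 and 4 MeV with energy-fluence weights.
WEIGHTS = np.array([0.2, 0.3, 0.3, 0.2])
# Approximate mass attenuation coefficients for water, cm^2/g (illustrative).
MU_OVER_RHO = np.array([0.097, 0.071, 0.049, 0.034])

def polyenergetic_terma(rad_depth_g_cm2):
    """Sum of mono-energetic TERMA terms, each attenuated at its own rate."""
    d = np.atleast_1d(rad_depth_g_cm2)[:, None]
    per_bin = WEIGHTS * MU_OVER_RHO * np.exp(-MU_OVER_RHO * d)
    return per_bin.sum(axis=1)

def effective_attenuation(rad_depth_g_cm2):
    """Local slope of -ln(TERMA); it falls with depth as the beam hardens."""
    terma = polyenergetic_terma(rad_depth_g_cm2)
    return -np.gradient(np.log(terma), rad_depth_g_cm2)

rad_depth = np.linspace(0.0, 30.0, 61)
terma = polyenergetic_terma(rad_depth)
mu_eff = effective_attenuation(rad_depth)
```

Low-energy bins are removed faster, so `mu_eff` decreases with depth. A mono-energetic TERMA calculation cannot show this hardening.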

What collapsed cone isn’t

Part of CCC's longevity comes from the fact that it is often poorly summarized. That’s why it’s worth saying clearly what it is not.

It is not:

- a lightly corrected pencil beam;

- a Monte Carlo in disguise;

- an exact transport solution;

- an old method preserved by inertia.

It is:

- a convolution/superposition method with a strong physical basis;

- a computationally efficient solution for heterogeneous media;

- an algorithm particularly good at representing scatter and penumbra at a viable clinical cost.

Where it continues to make sense

CCC remains very strong when the department needs:

- fast clinical calculation;

- good response in relevant heterogeneities;

- robustness across a wide range of techniques and machines;

- a predictable platform for routine use.

It tends to be less convincing when the clinical question requires an even more explicit treatment of the physics in extreme scenarios, especially when the department has a well-validated Monte Carlo engine and the additional cost makes sense.

But this observation needs to be read carefully. In many scenarios, the difference between CCC and heavier methods does not change clinical management. In others, it changes. The merit of CCC is precisely in having expanded, by decades, the range of cases in which an analytical engine can still deliver sufficient physics.

This is where collapsed cone continues to deserve space in any serious discussion about commercial algorithms. With Mackie, the field gained deposition kernels derived from Monte Carlo. With Ahnesjö, it gained an ingenious way of transporting this energy in heterogeneous media without making the calculation impractical. With subsequent implementations, such as those described by Cho and by manufacturers, this line evolved into a routine clinical engine.

If today it still makes sense to study it in detail, it is not because of nostalgia. It’s because it continues to explain, better than much modern discourse, how to make relevant physics fit into clinical time.