In nearly homogeneous water, many commercial algorithms appear more similar than they really are. The differences appear when the beam crosses lung, enters an air cavity, passes through dense bone or touches metal. This is where simplified physics starts to take its toll.

Therefore, the comparison that matters is not “which TPS seems more sophisticated”, but what happens when the beam has to deal with:

- low-density lung;

- air-tissue interfaces;

- cortical bone;

- implants and high-Z materials;

- small modulated fields in irregular geometries.

This article organizes the problem by clinical scenario. The useful question is not who wins an abstract contest between Pencil Beam, AAA, collapsed cone, Acuros XB and Monte Carlo. The useful question is a different one: which hypothesis of each family begins to break when the medium stops looking like nearly homogeneous water.

In this Article

- 1. Lung: the great test of algorithms’ honesty

- 2. Air-tissue interfaces: where lateral physics weighs heavily

- 3. Heterogeneity is not just a difference in maximum dose

- 4. Bone: where the discussion stops being just heterogeneity and becomes material

- 5. Metal and high-Z materials: when the problem becomes even less “water-like”

- 6. Poor imaging can mask or amplify algorithmic differences

- 7. Small fields: when simplification takes its toll

- 8. What each family tends to see best

- 9. The classic error: blaming the algorithm when the problem is something else

- 10. How to read heterogeneity more maturely

- 11. What changes when heterogeneity meets modulation

Lung: the great test of algorithms’ honesty

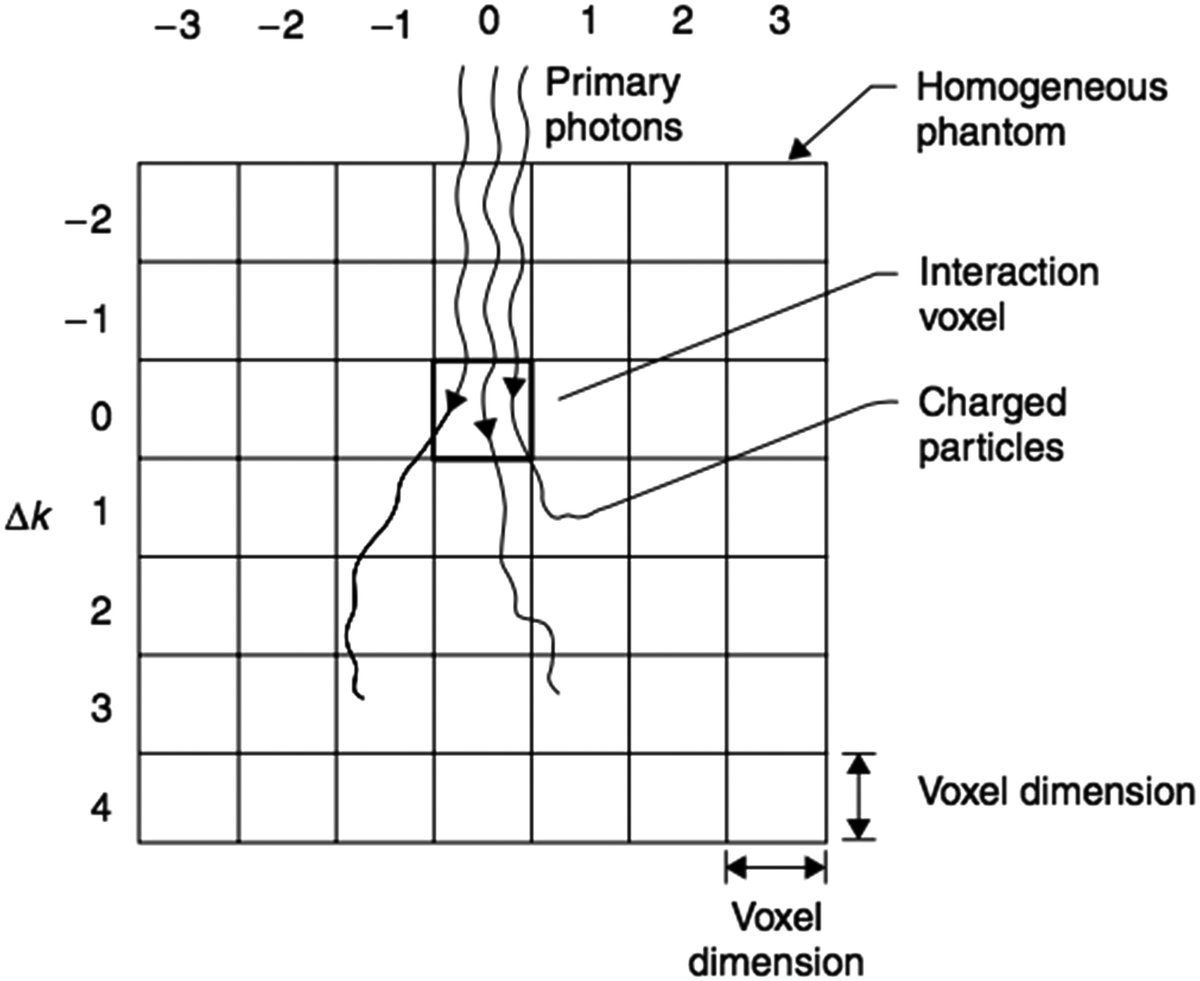

The lung remains one of the best stress tests for any dose engine. Low density alters the transport of charged particles, widens the penumbra, disturbs electronic equilibrium and makes re-entry into soft tissue especially sensitive to the model used.

Pencil Beam

Classical Pencil Beam suffers here because its strength lies in corrections along the beam’s main axis, not in robust lateral treatment of heterogeneity. In lung, this limitation shows up quickly:

- under- or overestimation of dose, depending on the case;

- difficulty in adequately representing lateral broadening;

- greater fragility in small fields.

The underlying problem is that the algorithm tries to solve a strongly three-dimensional phenomenon with simplified lateral physics.
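To make the idea of a correction along the beam axis concrete, the sketch below computes a radiological (water-equivalent) depth along a single ray. The slab phantom, its densities and the function name are assumptions chosen for illustration, not taken from any TPS.

```python
def radiological_depth(slabs, depth_cm):
    """Water-equivalent depth at a geometric depth along one ray.

    slabs: list of (thickness_cm, relative_electron_density) in beam order.
    """
    remaining = depth_cm
    total = 0.0
    for thickness_cm, rel_density in slabs:
        step = min(thickness_cm, remaining)
        total += step * rel_density  # each segment is weighted by its density
        remaining -= step
        if remaining <= 0:
            break
    return total


# Hypothetical phantom: 3 cm water, 8 cm lung (relative density 0.25), 10 cm water.
phantom = [(3.0, 1.0), (8.0, 0.25), (10.0, 1.0)]
depth_beyond_lung = radiological_depth(phantom, 12.0)  # 1 cm past the lung slab
```

The result depends only on what lies along the ray. A lung insert 1 cm wide and one 10 cm wide give the same number, which is why a correction of this kind alone cannot describe lateral effects.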

AAA

Classical AAA greatly improves the picture because it incorporates:

- beamlets;

- kernels derived from Monte Carlo;

- radiological depth;

- anisotropic scattering treatment.

But the Varian manual itself admits clear limitations in lung. For 4–6 MV and fields ≥ 5×5 cm², the algorithm tends to underestimate dose in the lung and overestimate dose in water-equivalent tissue beyond the lung. At higher energies (10–20 MV) and fields ≤ 5×5 cm², it tends to overestimate the lung dose, with the error increasing as the field size and lung density decrease.

The Handbook of Radiotherapy Physics reinforces this reading, citing benchmarks in which AAA overestimated the lung dose for small, high-energy beams.

Collapsed cone

Collapsed cone methods were a direct answer to part of this problem. The handbook highlights that implementations of the CCC family can represent, with good precision:

- penumbra widening;

- dose reduction on the beam axis within the lung.

This already puts the CCC family at a physically stronger level than classical Pencil Beam at low density.

Acuros XB and Monte Carlo

Lung is where the difference between algorithmic families becomes clearest. The handbook cites comparisons between EGSnrc, Acuros XB and AAA in a phantom with a low-density heterogeneity, showing good agreement between Acuros XB and EGSnrc in an extreme situation, while AAA fails to adequately reproduce the dose within and beyond the heterogeneity.

In other words: lung is a scenario in which engines based on explicit transport or Monte Carlo usually show their gain more clearly.

Air-tissue interfaces: where lateral physics weighs heavily

Air cavities, paranasal sinuses, airways and other air-tissue interfaces expose the limitation of algorithms that treat heterogeneity mainly as longitudinal correction.

What Pencil Beam tends to do badly

Air-tissue interfaces challenge Pencil Beam because of the loss and recovery of electronic equilibrium. When the algorithm does not represent the lateral transport of secondary particles with good fidelity, the dose at the interface and immediately beyond it tends to be less reliable.

Where AAA improves and where it still suffers

Classical AAA improves significantly on Pencil Beam because it already handles multiple lateral directions via anisotropic kernels. But it still belongs to the family of scaled kernels. This means that the description of the interface remains mediated by an approximation, not by explicit transport.

Where Acuros and MC gain ground

Air-tissue interfaces are one of the scenarios in which the formulation of Acuros XB and Monte Carlo stops being a theoretical advantage and becomes a practical one. When the clinical question depends on how the dose reorganizes locally after passing through air, the explicit transport solution tends to be more convincing.

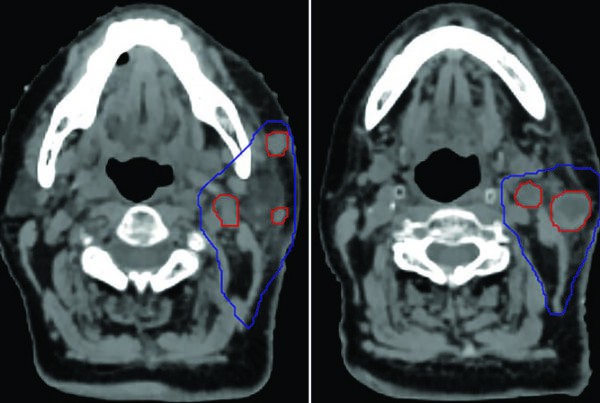

Heterogeneity is not just a difference in maximum dose

A common mistake when comparing algorithms in heterogeneous media is to look only at an aggregate number, such as maximum dose, mean dose or a simplified coverage metric. Many of the most relevant differences appear in another form:

- penumbra shift;

- change in distal gradient;

- dose reorganization at the interface;

- local change in small regions, even when global DVH changes little.

This is particularly important in lung and air cavities. Two algorithms may appear “similar” in global metrics and yet disagree exactly in the region where clinical risk is concentrated.
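A toy example of how global metrics can hide a local disagreement: two idealized 1D profiles with the same field width but different edge blur. The profile shape, the 2 cm half-width and the blur values are assumptions for illustration only; they do not come from any dose engine.

```python
import math


def field_profile(x_cm, half_width_cm, blur_cm):
    """Idealized 1D field profile with error-function edges (toy model)."""
    return 0.5 * (math.erf((x_cm + half_width_cm) / blur_cm)
                  - math.erf((x_cm - half_width_cm) / blur_cm))


# Sample both profiles from -5 cm to +5 cm in 0.05 cm steps.
xs = [-5.0 + 0.05 * i for i in range(201)]
sharp = [field_profile(x, 2.0, 0.3) for x in xs]  # narrow penumbra
broad = [field_profile(x, 2.0, 0.6) for x in xs]  # wider penumbra

# Aggregate metrics: maximum and mean of each profile.
max_diff = abs(max(sharp) - max(broad))
mean_diff = abs(sum(sharp) / len(sharp) - sum(broad) / len(broad))

# Point-by-point metric: the largest local disagreement.
worst_local_diff = max(abs(a - b) for a, b in zip(sharp, broad))
```

In this toy case the maximum and the mean are practically identical, while the largest point-by-point difference, located near the field edge, exceeds 10% of the central value.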

Bone: where the discussion stops being just heterogeneity and becomes material

If lung is the classic test of low density, bone is the classic test of material composition. Here, it is no longer enough to treat the voxel as water with a different density. The composition of the material begins to matter more visibly.

What this means for older algorithms

Algorithms that treat the medium primarily by water-equivalent scaling tend to capture part of the effect, but not all of the material difference.

What this means for Acuros XB

In Acuros XB, the mapping from CT to material and mass density places bone on another level of physical description. This is why, in the dose-to-medium versus dose-to-water debate, bone repeatedly appears as the biological material in which the difference is no longer small.

What this means for RayStation

RayStation itself warns that, when comparing different engines, the discrepancy between dose conventions can be relatively large in bone, on the order of 10%. This figure is important because it shows that part of the divergence between algorithms in bone comes not only from the transport itself, but also from the way the dose is reported.
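One common way to relate the two conventions is to multiply dose to medium by a water-to-medium mass stopping-power ratio. The sketch below uses that approach with placeholder ratios; the numbers are assumed, rounded values chosen only so that bone lands near the order of magnitude mentioned above, and they are not taken from any vendor or commissioning data.

```python
# Assumed, illustrative water-to-medium mass stopping-power ratios.
# Placeholder values only: not vendor data, not for clinical use.
ASSUMED_SPR_WATER_TO_MEDIUM = {
    "lung": 1.00,
    "soft_tissue": 1.01,
    "cortical_bone": 1.11,
}


def dose_to_water(dose_to_medium_gy, medium):
    """Convert dose to medium into dose to water using the assumed ratio."""
    return dose_to_medium_gy * ASSUMED_SPR_WATER_TO_MEDIUM[medium]


def convention_gap_percent(medium):
    """Relative gap between the two reporting conventions, in percent."""
    dose_medium = 1.0
    dose_water = dose_to_water(dose_medium, medium)
    return 100.0 * (dose_water - dose_medium) / dose_medium


gaps = {m: convention_gap_percent(m) for m in ASSUMED_SPR_WATER_TO_MEDIUM}
```

With these assumed ratios the gap is negligible in lung and soft tissue and reaches about 11% in cortical bone, without any change in the transport calculation itself.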

Metal and high-Z materials: when the problem becomes even less “water-like”

Implants, prostheses and very dense materials put pressure on the dose calculation on several fronts:

- image artifacts;

- need for a material override;

- difference between the actual composition and the scaled-water hypothesis;

- local impact of the dose-to-medium versus dose-to-water convention.

Here, material-explicit algorithms gain importance, but they also require more discipline. The Varian manual highlights that Acuros XB may block the calculation or require user intervention when densities exceed the range covered by automatic material assignment.

This detail shows an important clinical truth: in high-Z materials, more physical algorithms do not forgive poor case preparation. They are more powerful, but also more dependent on correct material representation.
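The behavior described above, automatic assignment inside a covered density range and a hard stop outside it, can be sketched as follows. The density bounds, material names and exception class are hypothetical and do not reproduce the actual Acuros XB material table.

```python
# Hypothetical automatic-assignment table: (upper density bound in g/cm^3, material).
# Bounds are illustrative placeholders, not the values used by any real TPS.
HYPOTHETICAL_AUTO_MATERIALS = [
    (0.6, "lung"),
    (1.0, "adipose"),
    (1.1, "muscle"),
    (1.5, "cartilage"),
    (3.0, "bone"),
]


class MaterialOverrideRequired(ValueError):
    """Raised when the density lies outside the automatic assignment range."""


def assign_material(density_g_cm3, override=None):
    """Return a material name, preferring an explicit user override."""
    if override is not None:
        return override
    if density_g_cm3 < 0:
        raise ValueError("density must be non-negative")
    for upper_bound, material in HYPOTHETICAL_AUTO_MATERIALS:
        if density_g_cm3 <= upper_bound:
            return material
    raise MaterialOverrideRequired(
        f"density {density_g_cm3} g/cm^3 exceeds the automatic range; "
        "assign a material manually"
    )
```

Under these assumptions, `assign_material(1.8)` returns `"bone"`, `assign_material(4.5)` raises `MaterialOverrideRequired`, and `assign_material(4.5, override="titanium")` succeeds because the user has taken responsibility for the material.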

Poor imaging can mask or amplify algorithmic differences

For metal and high-Z materials, it is worth adding an important operational point: sometimes what appears to be a difference between algorithms is, in fact, a difference in the way each engine reacts to the same problematic image.

CT artifacts, an inadequate calibration curve and a missing material override can:

- distort density;

- distort presumed composition;

- shift the observed difference away from the actual physics of the case.

This is especially relevant when comparing material-explicit engines with more water-equivalent engines. Without properly treated imaging, the theoretical superiority of the more physical method may not appear cleanly.

Small fields: when simplification takes its toll

“Small field” is a short term for a long physical problem. When the field shrinks, the penumbra occupies a larger fraction of the distribution, electronic equilibrium becomes more fragile and any simplification in lateral modeling or in the treatment head model weighs more.
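A rough way to see the penumbra argument is to compare the combined width of the two penumbra regions with the nominal field width. The ratio below is a crude geometric indicator, and the 0.4 cm penumbra width is an assumed value for illustration.

```python
def penumbra_fraction(field_width_cm, penumbra_width_cm):
    """Ratio of both penumbra widths to the nominal field width, capped at 1."""
    return min(1.0, 2.0 * penumbra_width_cm / field_width_cm)


# Assumed penumbra width of 0.4 cm per field edge.
large_field = penumbra_fraction(10.0, 0.4)  # 10 cm wide field
small_field = penumbra_fraction(1.0, 0.4)   # 1 cm wide field
```

With the same assumed penumbra, the ratio goes from under 10% for the 10 cm field to most of the field width for the 1 cm field, so any error in modeling the edge dominates the result.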

Pencil Beam

It is the family that suffers most, especially in heterogeneity.

AAA

It can remain very competent in various contexts, but the Eclipse manual highlights that relevant differences in the lung become more serious precisely when the fields are small and the energies high.

RayStation CC

The RayStation IFU draws attention to another very important point: in small-field rotational plans, the RayStation calculation becomes highly sensitive to the MLC parameters of the beam model. This is valuable because it prevents a frequent error. Not every difference in a small field is “the fault of the dose engine”; some of it may come from the beam model and the MLC.

MC and more physical engines

Monte Carlo engines, or engines closer to explicit transport, often gain ground here, but this does not eliminate the importance of beam model, statistics, grid resolution and commissioning.
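On the statistics point, a minimal sketch of the usual rule of thumb: without variance reduction, the statistical uncertainty of a Monte Carlo estimate falls roughly as one over the square root of the number of histories. The function names and the reference numbers are assumptions for illustration.

```python
import math


def relative_uncertainty(reference_uncertainty, reference_histories, histories):
    """Scale a known uncertainty to another history count (1/sqrt(N) rule)."""
    return reference_uncertainty * math.sqrt(reference_histories / histories)


def histories_for_target(reference_uncertainty, reference_histories, target_uncertainty):
    """Histories needed to reach a target uncertainty under the same rule."""
    return reference_histories * (reference_uncertainty / target_uncertainty) ** 2


# Assumed reference point: 2% uncertainty at one million histories.
needed = histories_for_target(2.0, 1e6, 1.0)
```

Under this scaling, halving the uncertainty costs four times as many histories, which is why statistics and grid resolution remain part of the comparison even for the most physical engines.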

What each family tends to see best

| Scenario | Families that tend to suffer most | Families that tend to respond best |
|---|---|---|
| Low-density lung | Classical Pencil Beam | Collapsed cone, Acuros XB, Monte Carlo |
| Soft tissue re-entry after lung | Pencil Beam, AAA in certain scenarios | Acuros XB, Monte Carlo |
| Bone and dense interfaces | Strongly water-equivalent models | Acuros XB, Monte Carlo |
| Metal and high-Z materials | Any algorithm without reliable material mapping | Well-configured material-explicit engines |
| Small heterogeneous fields | Pencil Beam, some of the simplified models | More physical engines, as long as they are well modeled |

This table needs to be read maturely. It does not say that an algorithm “always loses”. It shows where each family’s dominant hypothesis tends to come under the most pressure.

The classic error: blaming the algorithm when the problem is something else

There is a reading error that appears all the time in clinical comparisons: attributing every discrepancy to the name of the engine.

In heterogeneity, the observed difference can arise from several sources at the same time:

- physical family of the algorithm;

- grid resolution;

- material mapping;

- dose convention;

- beam model;

- MLC;

- scope of machine modeling and technique validation.

RayStation itself insists on this by saying that certain techniques, such as VMAT sequencing, should be treated as practically a new technique, requiring beam model validation and per-patient QA. This completely changes the tone of the discussion. The dose algorithm matters a lot, but it never acts alone.

How to read heterogeneity more maturely

A more mature reading of the comparison between algorithms in heterogeneity includes at least four questions:

- which physical phenomenon dominates this case?

- which hypothesis of the algorithm is under the most pressure here?

- does the observed difference come from transport, dose convention or beam model?

- is this divergence clinically relevant or just methodologically visible?

Without these questions, the comparison becomes a ranking. With them, it becomes clinical physics.

What changes when heterogeneity meets modulation

There is a final point worth highlighting: heterogeneity rarely appears alone in modern practice. It appears together with:

- intense modulation;

- multiple fields;

- arcs;

- small fields;

- complex MLC edges.

When this happens, the problem stops being just “how the algorithm treats density” and becomes “how the combination of algorithm, beam model and technique describes a complex beam in a complex medium”. This is exactly why regulatory texts such as the RayStation IFU insist so much on technique-specific validation and patient QA.

It is precisely in heterogeneity that names lose usefulness and physical hypotheses come to the surface. Pencil Beam, AAA, collapsed cone, Acuros XB and Monte Carlo do not disagree because one of them “is better” in the abstract. They differ because they simplify different parts of the same problem.

In lung and at air-tissue interfaces, lateral transport becomes the protagonist. In bone and high-Z materials, the problem stops being just density and becomes material. In small fields, beam model, MLC and discretization start to matter as much as the engine name.

If the team reads heterogeneity this way, the comparison between algorithms becomes much more useful. It stops being a debate about brands and becomes a concrete question about where a given calculation still holds and where it is already outside its comfort zone.