What Is Dynamic Beam Delivery and Why Monte Carlo Matters

Dynamic delivery techniques — IMRT, tomotherapy, and VMAT — are now routine in radiotherapy clinics worldwide. In these approaches, particle fluence is modulated by beam modifiers such as multileaf collimators (MLCs), whose positions vary continuously during irradiation. Some techniques also change the beam direction and energy simultaneously, as in arc therapy or scanned proton beams. Without Monte Carlo (MC) simulations, the influence of these beam modifiers on the spatial and energy distribution of incident particles is often approximated by the treatment planning system (TPS), which may not capture all dosimetric subtleties of the moving components.

Series overview: for the full roadmap and related articles, return to the complete guide on Monte Carlo in radiotherapy.

MC simulation provides the dosimetric accuracy needed to characterize these dynamic beam modifiers, verify TPS dose distributions, and perform independent monitor unit (MU) calculations. Its superiority in low-density tissue like lung — compared to the more standard analytical dose calculation algorithms — makes MC a natural choice for 4D radiotherapy applications. A properly validated dynamic MC model of the beam can serve as a commissioning tool to replace extensive, complicated measurements, especially when measurement resolution or accuracy is questionable. Accurate dose calculation methods are also required to reconstruct the patient dose delivery from log files recorded during treatment, a topic of growing clinical relevance.

This article dives deep into the simulation strategies for dynamic beam delivery, 4D patient geometries, interplay effects between beam and patient motion, and novel applications that push MC beyond what any commercial TPS currently offers. For a broader perspective on how the method works, see our article on Monte Carlo fundamentals.

Strategies for Simulating Time-Dependent Beam Geometries

Developing MC algorithms to model dynamic delivery requires an extremely accurate geometric blueprint — every component of the beam-modifying devices must be described in terms of geometry, atomic composition, and time-dependent positioning. The approaches for simulating dynamic geometries range, in order of increasing complexity, from modification of particle weights, to multiple static simulations of discrete geometrical states, to treating the beam motion as a probabilistic problem where the geometry is sampled on a particle-by-particle basis.

Particle Weighting

The simplest approach arose from techniques already used in analytical dose calculation algorithms. Weighting factors are determined from linear attenuation based on a ray tracing through the beam modifier geometry. Temporal variations of the beam modifier positions are accounted for by scaling the weighting factors by the fraction of the total delivery time that the modifier blocks the beam path. Ma et al. (2000) applied this technique to recalculate IMRT treatment plans from the CORVUS TPS, computing 2D intensity maps from leaf sequence files by accumulating MUs for unblocked areas and, for blocked areas, weighting by the average leaf leakage.

A critical limitation is that the non-uniformity of particle weights leads to greater statistical variance. To improve weight uniformity, particle splitting may be applied to particles with large weights, while Russian roulette can reduce the number of low-weight particles. However, these variance reduction techniques add complexity to the simulation code and require careful tuning. The approach also ignores the effect of the leaf tongue and groove as well as scatter contributions from the MLC leaves.

Static Component Simulation (SCS)

The SCS method runs multiple discrete simulations of individual geometrical states and is logical when geometry changes occur in discrete steps — for example, step-and-shoot IMRT. For a continuously variable geometry, a reasonable limit must be imposed on the number of geometry samples and thus the temporal resolution. An important practical consideration: simulating more geometries does not necessarily lengthen the total calculation time because the total number of histories to be simulated can be distributed between the individual geometries. The overhead comes from input file preparation, initialization, and post-processing steps. These individual simulations can be run in parallel, and results recombined later, or each simulation can be restarted from a previous one with an updated geometry (Shih et al., 2001).

The number of histories for each geometry can be calculated based on fractional MUs, meaning segments delivering fewer MUs receive fewer histories — at the cost of higher statistical variance in those segments. Alternatively, equal numbers of histories can be simulated for each geometry, and results weighted by fractional MUs during post-processing.

Position-Probability Sampling (PPS)

The PPS method, developed by Liu et al. (2001), is more naturally suited for continuously changing geometries. It requires cumulative probability distribution functions (CPDFs) for each geometrical parameter that varies. The probabilities are calculated from the fraction of total delivery time that each geometry element (MLC leaf, jaw) spends at a certain location. During initialization of each incident history, the geometry configuration is randomly sampled from this CPDF.

From an operational overhead perspective, PPS can be more efficient than SCS. Both approaches should produce the same statistical variance for an equal number of incident particles if the SCS method calculates the number of histories for each geometry based on the same CPDF used for PPS. Importantly, PPS is not limited to continuously changing geometries — by specifying the CPDF appropriately, a step-and-shoot delivery can also be modeled using this approach.

Dynamic Wedge Simulations

Dynamic or virtual wedges produce a wedge-shaped dose distribution by the dynamic motion of the collimating jaws. The jaw motion is specified as a function of fractional MUs in the segmented treatment table (STT). The spectrum of particles emerging from a virtual wedge may vary significantly from a static solid wedge, which differentially hardens the beam.

Verhaegen and Das (1999) performed the first reported MC simulation of a dynamic wedge using EGS4/BEAM on a Siemens linac. In their two-step approach, transport through the upper section of the treatment head is simulated first, scoring a phase space file before the upper jaws. Then 20 discrete simulations of transport through the jaws are performed between which one upper jaw moves in 1 cm steps. The resulting 20 phase space files are combined by taking a precalculated number of particles from each based on a formula for the ratio of MUs at each jaw position. They modeled both virtual and physical wedges from 15° to 60° for energies of 6–10 MV, obtaining good agreement with measurements except in the penumbra region of the toe end (discrepancies up to 4%). No major differences were found between physical and virtual wedges for beam hardening effects, except that 60° physical wedges produce significantly harder beams across the whole field due to higher tungsten absorption.

Shih et al. (2001) reproduced the Varian dynamic wedge delivery using the IRESTART feature in BEAM with updated jaw positions and incident histories for each STT entry. Verhaegen and Liu (2001) later developed the PPS method to simulate the Varian enhanced dynamic wedge (EDW) delivery, converting STT jaw positions to a CPDF. Ahmad et al. (2009) reported agreement within 2% and 1 mm compared to measurements of wedged profiles and output factors.

MLC-Based IMRT: Detailed Particle Transport

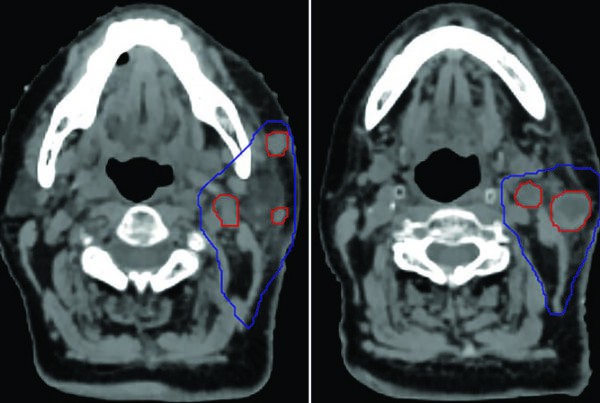

IMRT generates uniform dose distributions covering the target volume by applying non-uniform fluence patterns from multiple directions using continuous or discrete MLC aperture sequences. In step-and-shoot delivery, each subfield is set before the beam turns on. In sliding window (dynamic) delivery, the leaves move while the beam is on. The MLC leaf sequences — specifying leaf openings as a function of delivered MU — can come from the TPS or from post-delivery log files, which record machine state at fixed time intervals (e.g., 10 ms for Varian TrueBeam).

Deng et al. (2001) used a ray-tracing algorithm to calculate intensity maps that include the tongue-and-groove geometry of MLC leaves, demonstrating significant differences from simplified maps — a finding with direct implications for clinical dose accuracy. Keall et al. (2001) went further by including primary and scatter contributions through probability maps for leaf states (open, closed, or leaf tip passing through a grid point), with the scatter contribution obtained by integrating attenuation factors for successive leaf tip positions.

The fast MC code VCU-MLC (Siebers et al., 2002) takes an efficient approach: it uses a single-Compton approximation of photon interactions within the MLC, performs no electron transport, and reduces incident electron weights by the probability of striking an MLC leaf. The leaf geometry is approximated by segmenting each leaf into upper and lower halves with correct thickness to model tongue and groove. For each incident particle, the weight is modified by randomly sampling MLC positions 100 times from the leaf sequence file and averaging transmission probabilities. Compared to SCS and PPS methods, fewer source particles are required, resulting in a significant gain in calculation efficiency. The MLC model has also been extended to simulate photon transport through both jaws and MLC simultaneously with multiple Compton interactions (Seco et al., 2008).

Leal et al. (2003) and Seco et al. (2005) performed the first full linac-and-patient simulations for Siemens and Elekta linacs using BEAMnrc, where step-and-shoot delivery was modeled using the SCS method. Each beam consisted of 5–15 segments simulated individually, with histories proportional to segment MUs. Scripts were developed to automate generation and distribution of separate files for each segment phase space and the resulting dose distribution. For more detail on photon beam modeling, see our article on Monte Carlo modeling of external photon beams.

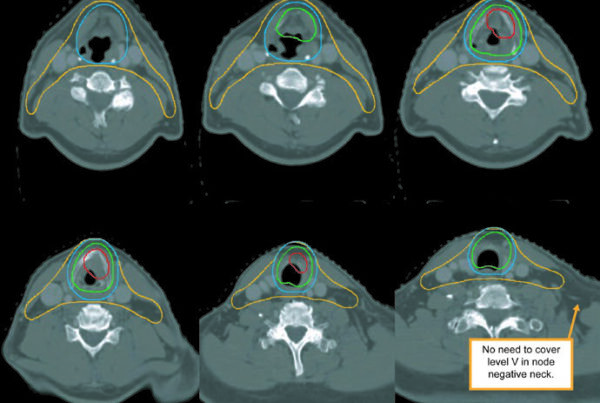

Tomotherapy: Helical Delivery Challenges

Simulating tomotherapy is particularly challenging because the gantry angle changes continuously during delivery. Helical tomotherapy uses a rotating fan beam source modulated by a binary MLC, with the delivery specified by a sinogram file containing fractional opening times for each leaf at discrete gantry positions. The amount by which the couch translates as a function of gantry rotation is specified by the pitch. Since the delivery is dynamic, leaf motion occurs while the source rotates, and subsampling of the sinogram may be needed to accurately capture the dosimetric effects of combined gantry and leaf motion.

Sterpin et al. (2008) developed TomoPen, a PENELOPE user code specifically for helical tomotherapy simulation. First, a phase space file is created for each of the three jaw settings used during delivery. Then, for each sinogram entry, a projection-specific phase space file is created by adjusting particle weights. To model the continuous delivery, each sinogram entry is subdivided into 11 subprojections with linearly interpolated leaf openings. Weighting factors assume linear attenuation through MLC leaves, and only leaves that are open or adjacent to an open leaf are considered — leakage radiation through fully closed regions is not modeled. Comparisons with film and ionization chamber measurements showed agreement within 2% and 1 mm.

Zhao et al. (2008a) took a different path using EGSnrc/BEAMnrc with the SCS method. Each sinogram entry is subdivided into static MLC subfields, and the number of histories for each subprojection is proportional to the calculated leaf opening time. Phase spaces are rotated in the XY plane and the isocenter is modified along the Z direction to simulate helical delivery.

VMAT: Volumetric-Modulated Arc Therapy

VMAT delivers IMRT with continuous gantry rotation while dynamically modifying MLC apertures and modulating the dose rate. The delivery is specified by a sequence of control points defining gantry angle, MLC leaf positions, and cumulative MU. There is strong clinical interest in using MC simulations for patient-specific quality assurance of VMAT plans. However, Boylan et al. (2013) cautioned that the approximations made by the TPS to model VMAT delivery mean the plan file settings may not accurately represent how the plan will actually be delivered by the linac.

Li et al. (2001) described an SCS-based approach for Elekta VMAT using EGS4/BEAM, discretizing each arc into 5°–10° steps for static simulations. Bush et al. (2008) modeled Varian RapidArc delivery, addressing the significant leaf motion between control points by computing a mean gantry angle for each consecutive pair of positions and using the adjacent leaf openings as DMLC control points at that mean angle. Variable dose rate is accounted for by weighting dose by fractional MUs per segment. The same group used recorded log files to reconstruct delivered dose from RapidArc treatments (Teke et al., 2009).

Two PPS-based approaches were developed for BEAMnrc. Lobo and Popescu (2010) based theirs on ISOURCE 9 in DOSXYZnrc, modified so that the first time a particle enters any dynamic component module it receives a “time stamp” — a randomly sampled fractional MU index. This stamp synchronizes all dynamic components: jaws, MLC, gantry rotation, collimator and couch motion. ISOURCE 20 uses a phase space scored above the secondary collimators as input (useful when manufacturers provide a phase space file instead of a full head model, as with Varian TrueBeam), while ISOURCE 21 consists of a full accelerator model. Belec et al. (2011) developed a second PPS approach storing the MU index as a time variable in the phase space file, replacing the ZLAST variable. A later modification (Popescu and Lobo, 2013) created “4D” phase space files in the IAEA format.

Dynamic Patient Simulations: Modeling Respiratory Motion

The need for 4D patient dose calculation was driven by interest in compensating for respiratory motion effects during treatment planning and delivery (Keall et al. 2004). Bortfeld et al. (2004) identified three effects of motion on delivered dose: (1) blurring along the motion path, (2) localized spatial deformations at organ boundaries with density changes, and (3) interplay between tumor motion and dynamic beam delivery. Each of these effects demands different levels of simulation complexity.

Convolution-Based Methods

If the dose distribution is assumed shift-invariant, the effect of motion over many fractions can be estimated by convolving the dose distribution with a probability distribution describing positional variations (Lujan et al., 1999). This captures only the blurring effect — dose deformations and differential motion cannot be modeled by convolution. The shift-invariance assumption also breaks down at tissue interfaces, potentially underestimating dose at these locations (Craig et al. 2001; Chetty et al. 2004). Fluence convolution was proposed as an alternative (Beckham et al., 2002), modifying particle positions and direction cosines in the phase space file by randomly sampling shifts from a respiratory motion probability distribution. Though it overcomes the shift-invariance limitation, it still approximates the patient as undergoing rigid body motion.

Dose-Mapping Methods: Center-of-Mass and Interpolation

The limitation of convolution is that it does not consider the differential organ motion, deformation, and density changes that cause the dose distribution shape to locally deform during delivery. The SCS-like approach calculates dose distributions on different respiratory phases, weighted by the fraction of the breathing cycle for which they occur. Rosu et al. (2007) demonstrated no significant DVH differences between cumulative doses calculated from ten respiratory states versus only inhale and exhale states — though this conclusion depends on the treatment plan design.

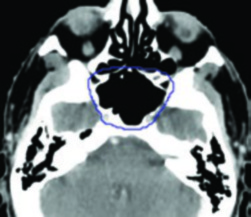

The simplest dose mapping approach, center-of-mass (COM) remapping, uses deformable image registration to determine which target geometry voxel corresponds to each reference geometry voxel. Paganetti et al. (2004) implemented COM in GEANT4 using the UpdateValue method to update patient geometry across 10 breathing phases from a 4D-CT dataset. Trilinear interpolation of dose from local neighboring voxels at the transformed COM point can improve accuracy, but Heath and Seuntjens (2006) showed that interpolation errors lead to incorrect dose calculations in regions of large dose and deformation gradients. Siebers and Zhong (2008) further demonstrated that when target geometry voxels merge in the reference geometry (compression), dose interpolation methods do not conserve integral dose.

Voxel Warping: Energy-Conserving MC Transport

Two MC approaches that ensure energy conservation were developed by Heath et al. (2007, 2011) and Siebers and Zhong (2008). The first method, voxel warping, deforms reference dose grid nodes using deformation vectors from image registration. Implemented in defDOSXYZnrc (Heath et al., 2007), particle transport occurs on the deformed voxel geometry. Since voxel indices do not change between reference and deformed states, energy is conserved. Densities of deformed voxels are adjusted in accordance with volume changes to conserve mass. Each deformed voxel face is subdivided into two subplanes, forming dodecahedrons, which doubles the number of distance-to-boundary calculations. The Jacobian determinant identifies transformation discontinuities:

$$\det J(N(x)) = \begin{vmatrix} \frac{\partial u_x}{\partial x} + 1 & \frac{\partial u_x}{\partial y} & \frac{\partial u_x}{\partial z} \\ \frac{\partial u_y}{\partial x} & \frac{\partial u_y}{\partial y} + 1 & \frac{\partial u_y}{\partial z} \\ \frac{\partial u_z}{\partial x} & \frac{\partial u_z}{\partial y} & \frac{\partial u_z}{\partial z} + 1 \end{vmatrix}$$

Where $u_x$, $u_y$, $u_z$ are the displacement field components. A negative determinant at a node indicates a discontinuity in the transformation — for example, at the lung-chest wall boundary where sliding occurs. These discontinuities can be removed by smoothing the transformation field either globally or locally.

The defDOSXYZnrc calculations run up to 10 times slower than standard DOSXYZnrc due to the additional boundary checking. The VMC++ reimplementation (Heath and Kawrakow, 2011) achieved a 130-fold improvement in computational efficiency using optimized tetrahedral geometry elements, dividing each deformed voxel into 6 tetrahedra. The main advantage of tetrahedral elements is eliminating the plane–particle intersection ambiguity while requiring fewer planes to check per element. Compared to rectilinear VMC++ calculations, the deformable geometry increases computation time by only a factor of 2 for the same statistical variance.

Energy-Mapping Methods

Siebers and Zhong (2008) proposed an alternative where particles are transported in the rectilinear target geometry while energy deposition points are mapped to the reference dose grid using deformation vectors. The energy deposition location is randomly sampled along the particle step, resulting in only 10%–50% additional computation time (termed etmDOSXYZnrc). However, if the transformation between reference and target geometry is inexact — as is always the case with real patient images due to artifacts, noise, and partial volume effects — the mapped energy will be inconsistent with the reference voxel masses, producing discontinuous dose distributions.

Zhong and Siebers (2009) addressed this with the Energy and Mass Congruent Mapping (EMCM) method, which maps both energy and mass consistently. Target voxels are subdivided into 100 subvoxels whose masses are mapped using the same deformation vectors as the energy deposition points, achieving 99.95% mass mapping precision. Heath et al. (2011) proposed an alternative volume-overlap energy-mapping approach: each reference voxel is subdivided into tetrahedra, deformed using displacement vectors, and the volume intersected by each tetrahedron and the target voxels determines the mapped energy. Mean differences between EMCM and trilinear dose interpolation were 7% of maximum dose.

Interplay Effects: When Beam and Patient Move Together

The interplay effect — the interaction between dynamic MLC leaf motion and tumor movement — is a central concern in intensity-modulated photon and proton therapy. Yu et al. (1998) demonstrated that with DMLC delivery, intrafraction motion causes large errors in the locally delivered photon dose per fraction due to motion in the beam penumbra region. The magnitude of these dose variations depends strongly on the speed of the beam aperture relative to the speed of target motion and on the width of the scanning beam relative to motion amplitude.

For proton therapy, interplay effects are compounded by additional factors: (1) patient breathing, (2) proton energy change times, (3) motion amplitude, and (4) the rescanning methodology used (Seco et al., 2009a). These factors can produce clinically significant dose inhomogeneities within the target volume.

The SCS method can study interplay by assigning each beam aperture to a specific breathing phase. Litzenberg et al. (2007) developed the “synchronized dynamic delivery” approach, combining MLC log file tracking (from Dynalog files) with real-time target volume position from wireless electromagnetic transponders (Calypso), which provide position updates 10 times per second. Each particle in the MC simulation is transported through a selected MLC segment and into a specific breathing phase of the dose cube. Although demonstrated in phantoms, this approach requires all breathing phase dose cubes to reside in CPU memory, which becomes demanding for large patient CT datasets.

To overcome the limitation of using multiple CT data cubes, Gholampourkashi et al. (2017) combined ISOURCE 21 with the voxel warping method in 4DdefDOSXYZnrc. For each incident particle, the MU index that samples dynamic collimator settings also determines the fractional breathing amplitude from a normalized respiratory trace. This amplitude scales the deformation vectors applied to the patient geometry mesh, recreating patient anatomy at that time point. Simulations of VMAT delivery were validated against film and MOSFET dose measurements in a custom-built programmable deformable lung phantom (Gholampourkashi et al. 2020). For more on how Monte Carlo handles electron beam calculations in clinical settings, see our article on patient dose calculation with Monte Carlo.

Proton Beam Dynamic Simulations

Before scanning delivery systems for proton beams, temporal modulation used spinning range modulator wheels to create a spread-out Bragg peak. Palmans and Verhaegen (1998) reported what is perhaps the first published dynamic MC simulation, implementing the PPS method in the PTRAN MC code to simulate a range modulator wheel.

Paganetti (2004) described a detailed model of the Northwest Proton Therapy Center beam line using GEANT4. Dynamic elements — range modulator wheel and beam scanning magnets — were modeled by exploiting GEANT4’s ability to update geometry parameter values during simulation. Geometry updates were performed in a linear time fashion with each time step assigned a number of histories. The modulator wheel rotation was simulated in 0.5° steps, while scanning magnet settings were updated in steps of 0.02 T. Calculation time was virtually unaffected by the number of geometry updates, as this involved only updating a pointer in memory.

Shin et al. (2012) described a framework for time-dependent geometries in the GEANT4-based proton MC tool TOPAS (Perl et al. 2012). Time-dependent parameter values are specified by assigning a Time Feature, with time evolution sampled in either sequential or random fashion defined by the Sequence. For more on Monte Carlo for ion beams, see our article on Monte Carlo for ion beams in radiotherapy.

Novel and Future Applications

The 4D MC methods summarized here can simulate deliveries with far more degrees of freedom than conventional linacs allow. Such deliveries are already possible with Developer Mode TrueBeam linacs (Varian), CyberKnife, and Vero-SBRT systems (BrainLab and Mitsubishi Heavy Industries) — but no commercial TPS can currently calculate dose distributions corresponding to beam trajectories of such complexity. 4D MC simulations have been shown capable of continuously modeling variable beam configurations and complex treatment geometry and kinematics with respect to the patient, giving these tools an important role in both verification and planning.

Teke et al. (2013) developed a BEAMnrc-based MC tool using ISOURCE 20 to simulate dose delivery based on the XML file encoding delivery instructions, demonstrating accurate simulation of deliveries with continuous collimator rotation, couch translation, and couch rotation. Popescu et al. (2015) performed VMAT simulations using ISOURCE 20 to score 4D phase spaces on patient CT phantom boundaries, demonstrating the ability to calculate incremental or cumulative dose distributions in the EPID simultaneous with patient dose calculation. Podesta et al. (2016) were the first to develop an MC technique to generate dose-rate information, studying temporal aspects of FFF (flattening-filter-free) beam delivery.

Comparison of Dynamic MC Simulation Approaches

| Approach | Method | Best Application | Efficiency vs. Standard MC | Main Limitation |

|---|---|---|---|---|

| Particle Weighting | Ray tracing + weight factors | Fast approximate IMRT calculations | High (minimal overhead) | Non-uniform weights increase variance |

| SCS | Multiple discrete static simulations | Step-and-shoot IMRT | Moderate (I/O overhead) | File management for many segments |

| PPS | Random sampling from CPDFs | VMAT, tomotherapy, continuous deliveries | Moderate to high | CPDF construction complexity |

| VCU-MLC | Single Compton + 100-sample averaging | IMRT patient dose calculations | High (fewer source particles needed) | Simplified MLC transport physics |

| defDOSXYZnrc | Deformed dodecahedron voxels | 4D patient simulations | ~10x slower than DOSXYZnrc | Requires continuous deformation field |

| VMC++ deformable | Tetrahedral deformed geometry | 4D patient simulations (fast) | Only 2x slower than rectilinear VMC++ | Tetrahedral mesh generation |

| EMCM | Energy and mass congruent mapping | Dose accumulation with mass conservation | 10%–50% overhead vs. DOSXYZnrc | 100-subvoxel subdivision per voxel |

| 4DdefDOSXYZnrc | ISOURCE 21 + voxel warping | Full 4D interplay studies | Variable | Full linac model required |

Source: Monte Carlo Techniques in Radiation Therapy (2nd ed., CRC Press, 2022)

The future points toward time-dependent treatment planning and delivery verification using MC as a unified framework — one that simultaneously models the beam, the patient anatomy, and their temporal interaction. As novel delivery platforms increase the available degrees of freedom, MC remains the only dose calculation engine with the flexibility and accuracy to keep pace. For perspectives on AI-driven acceleration of these calculations, see our article on AI and the future of Monte Carlo in radiotherapy.