Radiologists and AI Both Struggle to Spot Synthetic X-Rays

A peer-reviewed study published in Radiology, led by investigators at the Icahn School of Medicine at Mount Sinai in New York, reports that both radiologists and advanced artificial intelligence models struggle to reliably distinguish between authentic and AI-generated X-ray images. The findings raise serious concerns about clinical integrity and cybersecurity in diagnostic imaging environments.

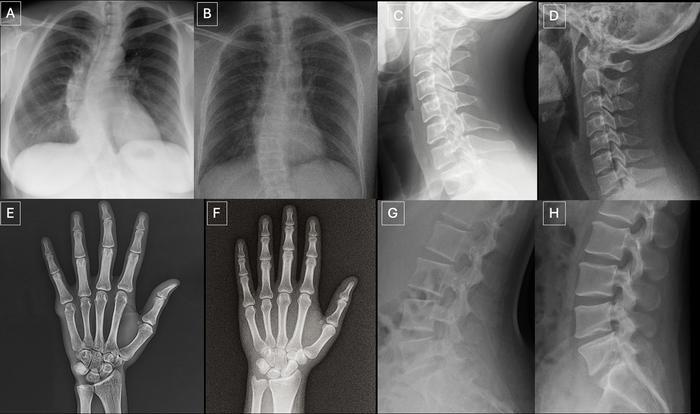

The research evaluated 17 radiologists from 12 centers across six countries who reviewed 264 images, half of which were synthetic. The dataset included images generated by ChatGPT-based systems as well as RoentGen, a diffusion model developed by Stanford Medicine.

Only 41% of Radiologists Spotted Fakes Without Warning

When radiologists were not informed that synthetic images were included, only 41% identified them without prompting. After disclosure, their average accuracy rose to 75%, with individual performance ranging from 58% to 92%. Notably, experience level did not correlate with detection accuracy, although musculoskeletal subspecialists performed better than other groups.

This result is concerning because it suggests that in routine clinical practice, where radiologists have no reason to suspect fabricated images, detection rates could be similarly low. Most radiologists simply would not expect to encounter a synthetic image in their PACS.

AI Models Also Failed at Detection

The multimodal large language models evaluated — GPT-4o, GPT-5, Gemini 2.5 Pro, and Llama 4 Maverick — achieved detection rates between 57% and 85%, with variability comparable to human radiologists. Most alarmingly, even the model used to generate some of the images was unable to consistently identify them. This indicates that generation technology has already outpaced the detection capabilities of the generators themselves.

The result echoes earlier findings on the difficulty of detecting AI-generated radiology reports: if text-based reports already present authenticity challenges, diagnostic images may pose an even greater risk.

Clinical and Legal Risks

Lead author Dr. Mickael Tordjman, a postdoctoral fellow at Mount Sinai, warned of potential misuse. “This creates a high-stakes vulnerability for fraudulent litigation if, for example, a fabricated fracture could be indistinguishable from a real one,” he said. He also cautioned about cybersecurity risks if manipulated images were introduced into clinical systems.

Risk scenarios include:

- Insurance fraud: synthetic images of nonexistent injuries for fraudulent reimbursement

- Fraudulent medical litigation: fabrication of radiological evidence of medical errors

- Clinical sabotage: insertion of fake images into medical records to compromise diagnoses

- Clinical trial manipulation: contamination of research datasets with synthetic data

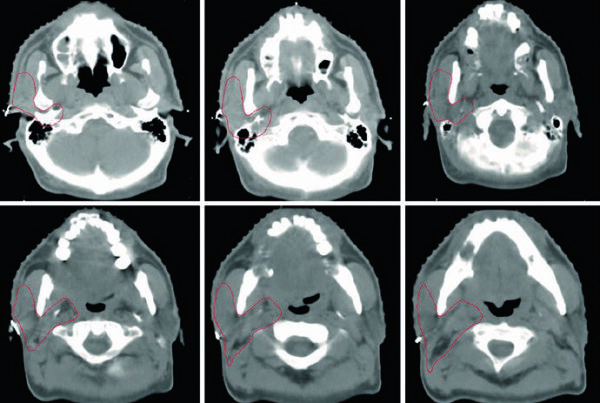

Visual Patterns and Proposed Safeguards

The study identified recurring visual patterns in synthetic images: overly smooth bones, symmetrical lung fields, and unusually uniform vascular structures. While useful as clues, these artifacts are likely to diminish as generative models evolve.

The authors recommend technical safeguards such as embedded watermarks and cryptographic signatures applied at the point of image capture, so that each image carries a proof of origin that is computationally hard to forge. They also call for expanded training datasets and specialized detection tools as generative models advance toward more complex modalities like CT and MR.
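The proof-of-origin idea can be illustrated with a minimal sketch. It is not the authors' method: it uses an HMAC from the Python standard library as a stand-in for a true digital signature, which would use an asymmetric key pair held by the capture device. The key `DEVICE_KEY` and the function names `sign_image` and `verify_image` are hypothetical.

```python
import hashlib
import hmac

# Hypothetical device key (an assumption for illustration). A real deployment
# would keep a private key in secure hardware and use an asymmetric signature.
DEVICE_KEY = b"example-device-key-not-for-production"


def sign_image(pixel_bytes: bytes, key: bytes = DEVICE_KEY) -> str:
    """Return a hex tag that binds the pixel data to the device key."""
    return hmac.new(key, pixel_bytes, hashlib.sha256).hexdigest()


def verify_image(pixel_bytes: bytes, tag: str, key: bytes = DEVICE_KEY) -> bool:
    """Recompute the tag and compare it to the stored one in constant time."""
    expected = sign_image(pixel_bytes, key)
    return hmac.compare_digest(expected, tag)


original = b"\x00\x01\x02\x03" * 64      # stand-in for raw pixel data
tag = sign_image(original)               # computed once, at capture time
tampered = original[:-1] + b"\xff"       # a single altered byte

print(verify_image(original, tag))       # True
print(verify_image(tampered, tag))       # False
```

Changing even one byte of the pixel data invalidates the tag. A fully synthetic image inserted later would carry no valid tag at all, provided the key never leaves the capture device.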

Implications for PACS and Imaging Workflows

For imaging system administrators and medical informatics specialists, the study reinforces the importance of image authentication protocols integrated into PACS. Mechanisms such as DICOM Digital Signatures and blockchain-based image traceability, still sparsely adopted, gain relevance in light of the concrete threat of radiological deepfakes. Departments planning their imaging toolsets for 2026 should treat authenticity verification as an essential component.
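The traceability idea can be sketched as a simple hash chain, in which each log entry commits to the image digest and to the previous entry. This is an illustrative assumption, not the DICOM Digital Signatures mechanism or any specific blockchain product. The names `append_entry`, `entry_hash`, and `chain_is_valid` are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, image_digest: str, event: str) -> str:
    """Hash the entry fields together with the previous entry's hash."""
    payload = json.dumps(
        {"prev": prev_hash, "image": image_digest, "event": event},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, image_bytes: bytes, event: str) -> None:
    """Append an audit entry that is linked to the last entry in the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    image_digest = hashlib.sha256(image_bytes).hexdigest()
    log.append({
        "prev": prev_hash,
        "image": image_digest,
        "event": event,
        "hash": entry_hash(prev_hash, image_digest, event),
    })


def chain_is_valid(log: list) -> bool:
    """Check every link and every entry hash from the start of the log."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(entry["prev"], entry["image"], entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, b"study-1-pixels", "acquired")
append_entry(log, b"study-1-pixels", "stored")
print(chain_is_valid(log))   # True

# Swapping the recorded image digest after the fact breaks the chain.
log[0]["image"] = hashlib.sha256(b"swapped-pixels").hexdigest()
print(chain_is_valid(log))   # False
```

A chain like this only makes tampering evident. It does not prevent tampering, and it depends on the most recent hash being stored somewhere an attacker cannot rewrite.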

Outlook: A Digital Arms Race

The study suggests we are at the beginning of an arms race between generation and detection of synthetic medical images. As diffusion models and multimodal LLMs become more sophisticated, the ability to create radiological images indistinguishable from real ones will only increase. The response will need to combine technical solutions (watermarks, cryptography), regulatory measures (mandatory authentication standards), and education (training radiologists to recognize synthetic artifacts).

Source: DOTmed Healthcare Business News